Published online Apr 18, 2026. doi: 10.5312/wjo.v17.i4.113710

Revised: October 2, 2025

Accepted: January 14, 2026

Published online: April 18, 2026

Processing time: 221 Days and 10.1 Hours

Artificial intelligence (AI) shows promise in musculoskeletal imaging, particularly in detecting fractures in emergency situations. Nonetheless, doubts persist re

To evaluate the AI fracture detection system's diagnostic performance vs radio

We retrospectively reviewed radiographic examinations over 3 months interp

The AI algorithm achieved an accuracy of 94.0%, a sensitivity of 89.9%, a specificity of 96.1%, a negative predictive value of 96.4%, and a positive predictive value of 89.8%. Out of 563 fractures identified in radiology reports, 54 (9.6%) were missed by AI. Most false negatives (92.6%) were due to subtle or minimally displaced fractures, primarily at the wrist and ankle. False positives (n = 58) were mainly caused by degenerative changes, growth plate variations, or healed fractures (91.4%). FN cases had greater clinical impact, often requiring fracture clinic follow-up (61.1%), additional imaging (14.8%), or hospital admission (5.6%), whereas FP cases mainly led to unnecessary follow-up or imaging.

AI demonstrated strong diagnostic performance, comparable to that in the published literature, but remains limited in detecting subtle and anatomically complex fractures. FN errors pose risks of delayed or missed treatment, while FP errors increase resource utilisation. AI should be integrated as a triage and decision-support tool with radiologist oversight, and future refinement should target wrist and ankle injuries and better differentiation of chronic from acute findings.

Core Tip: Artificial intelligence (AI) is increasingly utilised in musculoskeletal imaging; however, its clinical utility in fracture detection remains a subject of debate. In this retrospective diagnostic accuracy study, we evaluated an AI-based fracture detection system against radiologist reports across more than 2000 limb radiographs. The AI demonstrated high accuracy but failed to identify approximately 10% of fractures, primarily subtle or minimally displaced injuries, and frequently overcalled old or degenerative changes as fractures. False negatives posed a greater clinical risk than false positives, underscoring the importance of implementing AI as a triage and decision-support tool rather than a substitute for radiologists.

- Citation: Al Hajaj SW, Soliman K, Zafar M, Garnham C, Al Hajaj D, Elshafie O, Alsswah A, Elwan MH. From subtle breaks to missed diagnoses: Real-world evaluation of an artificial intelligence fracture detection tool. World J Orthop 2026; 17(4): 113710

- URL: https://www.wjgnet.com/2218-5836/full/v17/i4/113710.htm

- DOI: https://dx.doi.org/10.5312/wjo.v17.i4.113710

Limb fractures are a common and clinically significant concern worldwide, with considerable social and economic consequences. Research suggests a higher incidence of fractures in the lower limbs than in the upper limbs, primarily resulting from high-energy trauma such as vehicular accidents[1,2]. These fractures show a bimodal pattern, occurring most frequently in young males and older females. Young males typically sustain these injuries from high-impact activities, while older females are more likely to be affected by falls[3].

Radiologists play an essential role in the diagnostic process, especially in trauma and emergency settings, where their expertise is critical for accurately and quickly identifying fractures and other injuries. Nevertheless, the practice of radiology faces numerous challenges, including variability, fatigue, and human error, which can result in missed or delayed diagnoses with serious implications for patient care. Variability in radiological interpretation is a significant concern because inconsistent readings can lead to diagnostic errors. This variability may stem from individual differences in judgment and is also influenced by external factors such as fatigue and environmental conditions, which can impact a radiologist's performance and decision-making capabilities[4,5]. Fatigue contributes to radiological errors because it hampers a radiologist's vigilance and ability to identify subtle fractures, particularly in complex anatomical regions[6]. Human error in radiology is commonly divided into perceptual errors, where abnormalities go unnoticed, and cognitive errors, where the importance of detected ab

The advent of artificial intelligence (AI) in the field of medical imaging, specifically in the detection of fractures, has been revolutionary, providing substantial improvements in diagnostic precision and efficiency. AI systems, particularly those utilising deep learning and convolutional neural networks, have exhibited superior capabilities in detecting bone fractures across diverse anatomical regions and imaging techniques[8,9]. These models have demonstrated high sen

Research on AI models for detecting limb fractures and identifying missed fractures (false negatives) compared to radiologists is crucial for enhancing the accuracy and efficiency of trauma diagnosis. This research is especially important because missed fractures continue to be a major source of diagnostic errors, leading to delayed treatment and higher healthcare costs[10]. Studies show AI sensitivities over 90%, with specificities similar to those of clinicians, highlighting AI’s potential to enhance radiological assessments[11]. Despite advances, challenges persist in accurately identifying subtle or hidden fractures and reducing false positives, which could result in unnecessary treatments[12,13].

Conflicting evidence exists regarding whether AI surpasses clinicians in performance or functions most effectively as an adjunct. Some studies demonstrate AI's superior sensitivity; however, they also reveal lower specificity. Conversely, other research highlights improved clinician performance when assisted by AI[14,15].

This study aims to evaluate the diagnostic accuracy of an AI model in detecting limb fractures, and to characterise the fractures it misses, compared with human radiologists. We evaluated the performance of the AI model deployed at our institution in detecting fractures on limb X-rays, comparing its results with those in official radiology reports. Additionally, this study clarifies AI's diagnostic capabilities, examines factors that influence performance variability, and provides guidance for future clinical applications. It also addresses the knowledge gap on AI's comparative effectiveness and practical utility in medical diagnostics.

We conducted a retrospective analysis of patients presenting to the emergency department (ED) with suspected fractures at Kettering General Hospital, a district general hospital in the United Kingdom. We followed the STARD checklist in conducting our study. The objective was to evaluate whether AI (RBfracture, version 2.1.0, Radiobotics, Denmark) can function as a dependable and efficient diagnostic support tool within emergency care environments. Data were obtained from the hospital’s picture archiving and communication system, which encompasses all radiological images and reports for patients with suspected fractures. The data collection spanned three months, facilitating a direct comparison of diagnostic performance and workflow efficiency. The timeframe for data collection extended from January 1, 2024, to March 1, 2024. All pertinent radiological findings, AI outputs, and clinical notes related to each patient during this period were extracted for analysis. The inclusion criteria comprised all patients who underwent X-ray imaging for suspected limb fractures. Exclusion criteria included incomplete records, non-limb radiographs, and cases where neither the AI system nor the radiologist provided a diagnostic output. Radiology reports served as the reference standard, as they do in routine practice, which allowed extensive retrospective evaluation of over 2000 cases. Because radiology reports are not infallible, all discordant cases were reviewed by fellowship-trained musculoskeletal radiologists to support reliable error classification and outcome analysis.

A total of 2054 radiographic examinations were assessed against radiology reports serving as the reference standard. The mean patient age was approximately 49.8 years (SD approximately 27 years), and approximately 51.3% of patients were female.

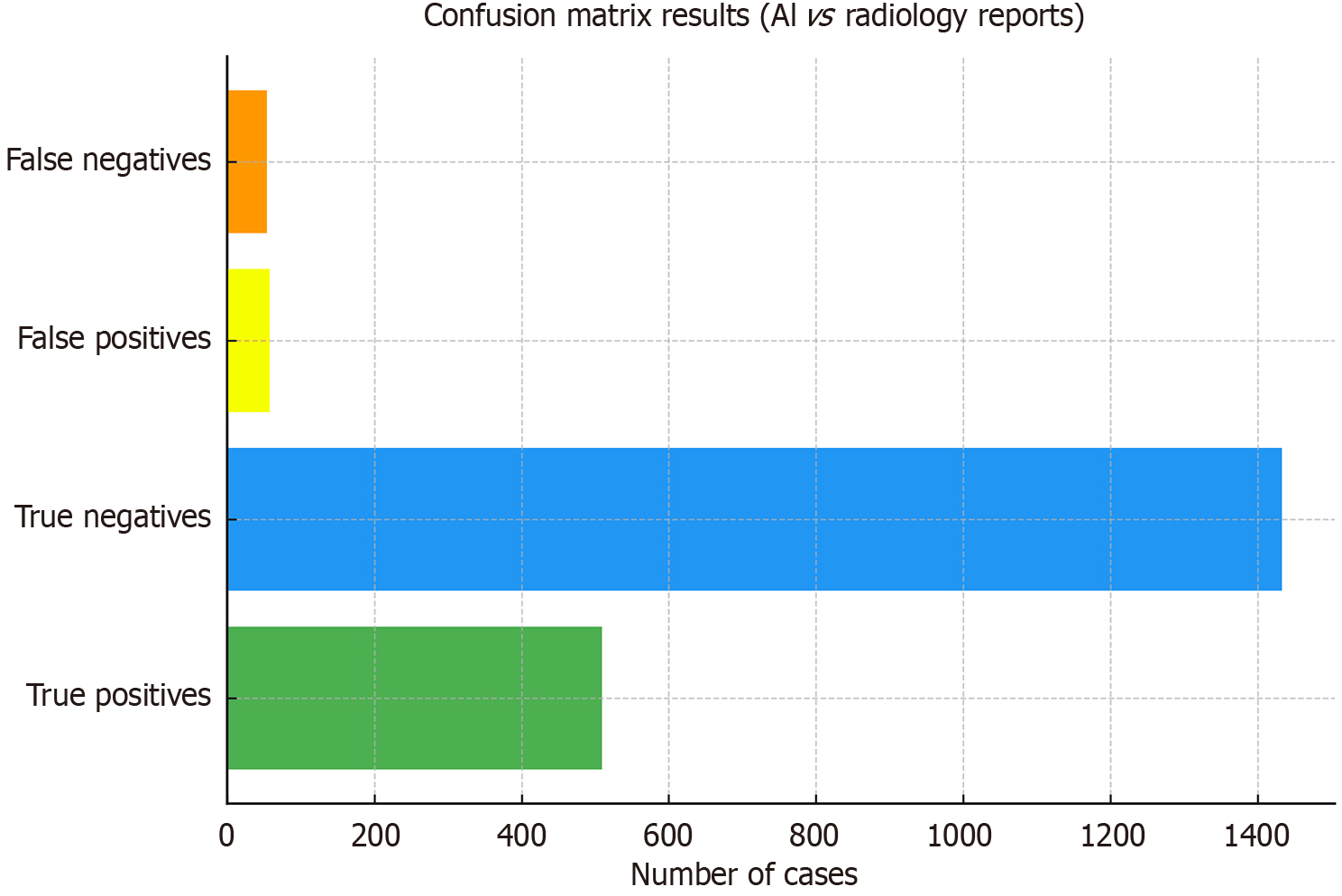

The data show 509 true positives (TP), 1433 true negatives (TN), 58 false positives (FP), and 54 false negatives (FN) (Figure 1). This results in an overall accuracy of 94.0%, with a sensitivity of 89.9%, a specificity of 96.1%, a negative predictive value of 96.4%, and a positive predictive value of 89.8%. Among the 563 fractures confirmed in radiology reports, the AI missed 54, giving a missed fracture rate of 9.6%.
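The standard definitions behind these figures can be sketched directly from the four counts. The following is a minimal illustration, not the authors' analysis code; the class and function names are hypothetical. Recomputing from the counts as printed reproduces the specificity, predictive values, and missed fracture rate to one decimal place, while accuracy and sensitivity come out about half a percentage point higher than the reported values; the source does not state the rounding or denominators used.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """2x2 counts of AI output against the reference standard (hypothetical helper)."""
    tp: int  # AI positive, report positive
    tn: int  # AI negative, report negative
    fp: int  # AI positive, report negative
    fn: int  # AI negative, report positive


def diagnostic_metrics(c: ConfusionCounts) -> dict[str, float]:
    """Return standard diagnostic accuracy measures as fractions in [0, 1]."""
    total = c.tp + c.tn + c.fp + c.fn
    fractures = c.tp + c.fn        # reference-standard positives
    non_fractures = c.tn + c.fp    # reference-standard negatives
    return {
        "accuracy": (c.tp + c.tn) / total,
        "sensitivity": c.tp / fractures,
        "specificity": c.tn / non_fractures,
        "ppv": c.tp / (c.tp + c.fp),
        "npv": c.tn / (c.tn + c.fn),
        "miss_rate": c.fn / fractures,  # equals 1 - sensitivity
    }


# Counts as reported in the text above.
counts = ConfusionCounts(tp=509, tn=1433, fp=58, fn=54)
metrics = diagnostic_metrics(counts)

for name, value in metrics.items():
    print(f"{name}: {value:.1%}")
```

Keeping the counts in one structure makes it easy to check that the reported percentages and the 2 × 2 table agree with each other.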

Clinical notes and outcome data were available for all 112 misclassified cases, comprising 54 FNs and 58 FPs. The causes of these errors are detailed in Table 1. Most false negatives (92.6%, n = 50/54) were due to subtle or minimally displaced fractures. Isolated errors were caused by projectional artefacts (1.9%, n = 1/54), and a small portion (5.6%, n = 3/54) involved old or healed fractures misclassified as acute. Conversely, the majority of false positives (91.4%, n = 53/58) resulted from variations in the growth plate, degenerative changes, or prostheses. Old or healed fractures accounted for 6.9% (n = 4/58), and only one case (1.7%) involved the misclassification of a subtle fracture.

| Category | False positives, n (%) | False negatives, n (%) | Total errors, n (%) |
| --- | --- | --- | --- |
| Old/healed fracture/variant | 4 (6.9) | 3 (5.6) | 7 (6.2) |
| Subtle/minimally displaced fracture | 1 (1.7) | 50 (92.6) | 51 (45.5) |
| Overlapping and projection artifact | 0 (0.0) | 1 (1.9) | 1 (0.9) |
| Growth plate/degenerative changes/prosthesis | 53 (91.4) | 0 (0.0) | 53 (47.3) |
| Total | 58 (100) | 54 (100) | 112 (100) |

The clinical outcomes of FP and FN cases are summarised in Table 2. False negatives were more clinically significant: The majority needed outpatient fracture clinic follow-up (61.1%, n = 33/54), with some requiring advanced imaging (14.8%, n = 8/54), ED discharge with conservative treatment (18.5%, n = 10/54), or hospital admission (5.6%, n = 3/54). False positives mainly represented a resource burden rather than direct harm, with most cases resulting in outpatient follow-up (53.4%, n = 31/58), additional imaging (13.8%, n = 8/58), conservative management or ED discharge (15.5%,

| Outcome category | False negatives, n (%) | False positives, n (%) | Total, n (%) |
| --- | --- | --- | --- |
| Outpatient/fracture clinic follow-up | 33 (61.1) | 31 (53.4) | 64 (57.1) |
| CT/MRI performed | 8 (14.8) | 8 (13.8) | 16 (14.3) |
| ED discharge/conservative management | 10 (18.5) | 9 (15.5) | 19 (17.0) |
| Admitted | 3 (5.6) | 6 (10.3) | 9 (8.0) |
| Total | 54 (100) | 58 (100) | 112 (100) |

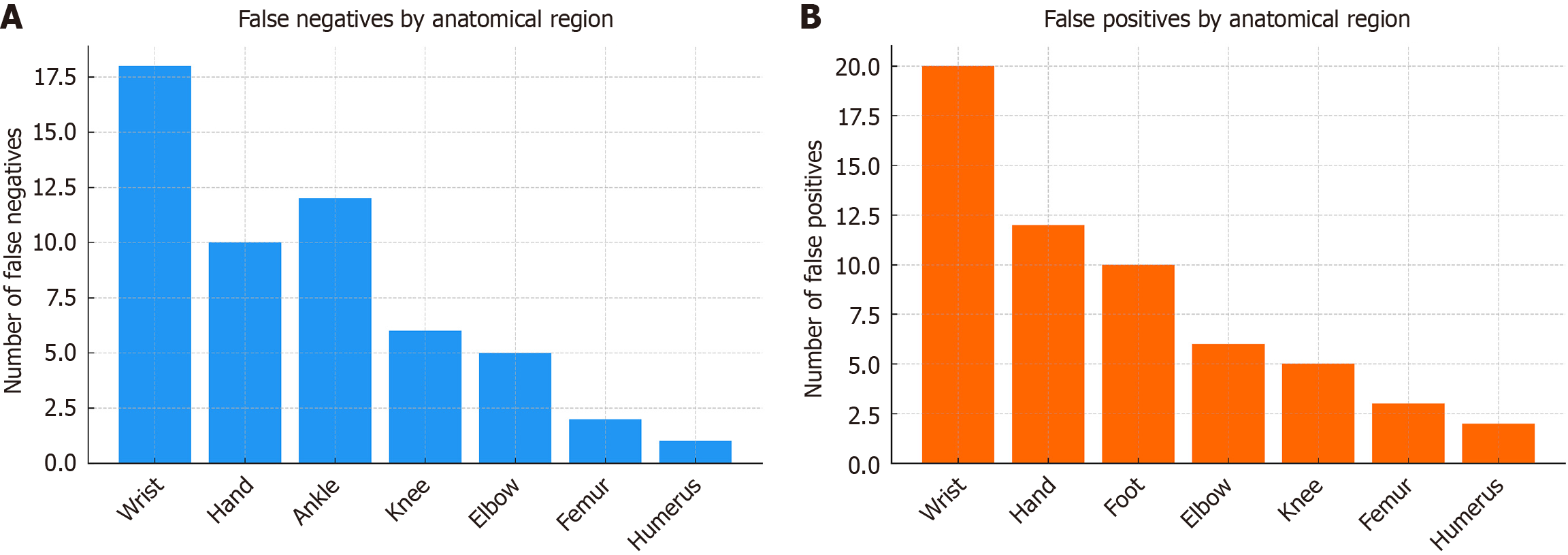

Anatomical stratification revealed clear patterns in AI errors. False negatives (Figure 2A) were most common in the wrist and hand, followed by the ankle and knee. These areas are complex and often have subtle or minimally displaced fractures, which can lead to missed diagnoses. Conversely, false positives (Figure 2B) primarily appeared in the wrist, hand, and foot, where degenerative changes, growth plate variations, and prosthetic materials were frequently misinterpreted as acute fractures. Larger cortical bones like the femur and humerus had fewer errors, likely because fractures in these bones are more obvious.

In this large real-world cohort, we evaluated an AI fracture detection tool against radiology reports in a dataset of over 2000 patients. The algorithm achieved a high accuracy of 94.0%, with a sensitivity of 89.9% and a specificity of 96.1%, comparable to other AI diagnostic systems in musculoskeletal imaging. The 9.6% missed fracture rate shows that, despite strong AI performance, limitations remain in clinical use. Our figures are comparable to those of previous systematic reviews of AI fracture detection, which reported pooled sensitivities of 91%-94% and specificities of 92% across ra

False negatives accounted for 54 cases (9.6% of all fractures), primarily resulting from subtle or minimally displaced injuries (92.6%), which most commonly affected the wrist and ankle. These missed cases often needed outpatient follow-up, advanced imaging, or hospital admission, highlighting their clinical significance. This pattern was previously identified in studies where AI accuracy significantly declined on “challenging” cases compared to routine ones[17]. This was consistent across other AI models, as several diagnostic studies and meta-analyses reported poorer performance in complex regions, such as the shoulder, compared to long bones, highlighting regional heterogeneity as a major source of error in pooled analyses[15,18,19].
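The source does not report confidence intervals for the missed fracture rate. As an illustrative sketch only, a Wilson score interval can convey the sampling uncertainty around 54 missed fractures out of 563; the function name and the choice of the Wilson method are assumptions, not part of the study's analysis.

```python
import math


def wilson_interval(events: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 gives ~95%)."""
    if n <= 0 or not 0 <= events <= n:
        raise ValueError("need n > 0 and 0 <= events <= n")
    p = events / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


# 54 fractures missed by the AI out of 563 reported fractures (from the text).
missed, fractures = 54, 563
low, high = wilson_interval(missed, fractures)
print(f"missed fracture rate {missed / fractures:.1%}, "
      f"approx. 95% interval {low:.1%} to {high:.1%}")
```

The Wilson interval is used here instead of the simpler normal approximation because it behaves better for proportions that are not close to 50%.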

Several factors may explain this pattern. Overlapping structures can obscure cortical disruptions behind other bones, making fractures less visible[15,18]. Additionally, there is a high degree of variability in normal anatomy[19]. Another factor is that fracture types common in these areas, such as avulsion, hairline, non-displaced, or occult fractures, are inherently more challenging to detect on radiographs, which reduces apparent sensitivity[20,21].

These characteristics align with human reader errors, where perceptual and technical challenges significantly influence missed diagnoses. Notably, a proportion of false negative cases necessitated surgical fixation, inpatient admission, or advanced imaging, underscoring their direct clinical significance. This observation reinforces the notion that AI should serve as a decision-support tool rather than a substitute for radiologists or emergency physicians.

False positives occurred in 58 cases, primarily due to degenerative changes, growth plate variations, or prosthetic material (91.4%), with fewer cases attributed to healed fractures. Though less serious clinically, these errors resulted in unnecessary follow-ups, extra imaging, and hospital stays.

Several primary studies reported frequent false positives and reduced specificity in specific anatomical regions[18,22]. Reports suggest that AI tends to overcall chronic abnormalities as acute[16]. Chronic degenerative changes, such as osteophytes, endplate collapse, subchondral sclerosis, and remodelling, can create cortical irregularities or lucencies that resemble acute fracture lines[22,23]. One study explicitly linked the presence of foreign material to decreased sensitivity in spine radiographs[22]. Commercial product reviews reported frequent false positives in real-world conditions with hardware and artefacts[18].

These patients frequently received unnecessary outpatient follow-up, advanced imaging, or hospital admission, indicating a resource burden rather than direct harm[8]. This pattern emphasises the need to include clinical context and patient history in AI models to reduce the over-identification of chronic findings.

Errors were found primarily in the wrist and ankle, followed by the elbow and knee. These regions are known for their diagnostic challenges due to their complex anatomy, overlapping tissues, and subtle injuries. Conversely, long bones, such as the femur and humerus, showed fewer errors, likely due to their larger cortical bone and more visible fractures. This pattern suggests that customising the algorithm for small joints and peri-articular injuries could improve its clinical effectiveness.

The dual nature of the AI errors observed (clinically significant FN cases and resource-intensive FP cases) highlights the nuanced role of AI in ED workflows. When used appropriately, AI can serve as a safety net for overlooked fractures and, particularly during periods of high patient volume, can support triage for expedited review[24,25]. However, over-reliance without adequate human oversight poses risks of missed injuries and unnecessary investigations[26]. AI systems often struggle to communicate uncertainty, which is vital in clinical decisions, and are trained for specific tasks like fracture detection without recognising other relevant abnormalities[24]. The potential for AI to miss subtle or occult fractures, especially in complex areas or among patients with osteopenia, highlights the need for human oversight[20].

AI can lower workload and fatigue, but must be carefully managed to complement human expertise, not replace it[27]. AI can improve fracture detection but must be balanced with human oversight to minimise missed injuries and unnecessary tests[24,28]. Integrating AI outputs into multidisciplinary decision-making, while retaining the final authority for radiologists, constitutes a pragmatic approach. This method enhances clinical decision-making without undermining the essential human element in healthcare[29].
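The triage role described above can be sketched as a worklist ordering rule. This is a hypothetical illustration only: the `Study` fields and the `prioritise_worklist` function are invented for this example and do not represent the RBfracture interface or the hospital's actual workflow. The point of the sketch is that the AI output changes only the reading order; no study is removed from the radiologist's list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Study:
    """A radiograph awaiting a radiologist's report (hypothetical record)."""
    accession: str
    ai_flagged: bool       # AI suggested a possible fracture
    minutes_waiting: int   # time since acquisition


def prioritise_worklist(studies: list[Study]) -> list[Study]:
    """Order studies so AI-flagged cases are read first, then by longest wait.

    Every study stays on the list: AI-negative studies are deprioritised,
    never auto-cleared, so the radiologist retains final authority.
    """
    return sorted(studies, key=lambda s: (not s.ai_flagged, -s.minutes_waiting))


worklist = [
    Study("A1", ai_flagged=False, minutes_waiting=50),
    Study("A2", ai_flagged=True, minutes_waiting=10),
    Study("A3", ai_flagged=True, minutes_waiting=30),
    Study("A4", ai_flagged=False, minutes_waiting=5),
]
ordered = prioritise_worklist(worklist)
print([s.accession for s in ordered])  # ['A3', 'A2', 'A1', 'A4']
```

Because AI-negative studies are still reported, a false negative of the kind described in this study would be delayed in the queue but not dropped.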

This study specifically examined the RBfracture system (version 2.1.0, Radiobotics, Denmark), a commercially available AI tool for detecting fractures on plain radiographs. By evaluating its performance in a real-world ED setting, our goal was not to generalise findings to all AI algorithms but to provide an independent assessment of one tool currently used in clinics. As a result, the findings highlight the strengths and limitations of RBfracture. While they may suggest potential challenges for AI-assisted fracture detection in general (e.g., handling subtle fractures or degenerative changes), they should not be directly applied to all fracture detection systems. Further research comparing multiple algorithms across various datasets is necessary to determine broader applicability.

Future work should focus on refining AI algorithms to address the errors identified in this study, particularly by improving sensitivity for subtle, minimally displaced fractures in the wrist and ankle. Using multi-view analysis, temporal comparison, and clinical data like age, injury mechanism, and comorbidities can reduce false negatives and positives. Training on larger, more diverse datasets that include challenging cases, such as peri-articular, occult, and chronic injuries, is essential for broader adoption. Prospective studies in real-time emergency settings are needed to evaluate AI for triage, workload management, and as a safety check for missed fractures. Research should also explore human-AI collaboration, assessing accuracy, clinical outcomes, efficiency, and AI acceptance by radiologists and emergency doctors.

This study has several limitations. Radiology reports were used as the reference standard, mirroring real-world practice, and may have introduced bias; to mitigate this, fellowship-trained musculoskeletal radiologists reviewed discordant cases. Only short-term outcomes were considered; the long-term consequences of FN or FP errors were not addressed. Furthermore, the investigation concentrated on a single commercial AI system within a retrospective framework, which restricts generalisability and prevents evaluation of real-time clinical performance.

This large cohort demonstrated that the AI fracture detection system had high accuracy, consistent with existing studies. However, approximately 10% of fractures were missed, primarily due to subtle or minimally displaced injuries in the wrist and ankle. False positives mainly came from chronic or degenerative changes. False negatives had a significant clinical impact, while false positives primarily increased resource utilisation. These results suggest AI is best as a triage and support tool under radiologist oversight. Future updates should focus on detecting subtle fractures more accurately, distinguishing between acute and chronic changes, and validating these findings in clinical workflows.

| 1. | Collin OLC, Tawa N, Opondo E, Ondieki J. Prevalence of Lower Limb Fractures among Adult Patients at Mama Lucy Kibaki Hospital. Int J Recent Innov Med Clin Res. 2024;3:20-27. |

| 2. | Rohimpitiavana HA, Soanirina MR, Razaka IA, Rabemazava AZLA, Solofomalala GD, Razafimahandry HJC. Epidemio-clinical aspects of fractures of the long bones of the lower limbs at the University Hospital Joseph Ravoahangy Andrianavalona. Batna J Med Sci. 2023;10:6-11. |

| 3. | Zhang J, Bradshaw F, Hussain I, Karamatzanis I, Duchniewicz M, Krkovic M. The Epidemiology of Lower Limb Fractures: A Major United Kingdom (UK) Trauma Centre Study. Cureus. 2024;16:e56581. |

| 4. | Jaramillo D. Radiologists and Their Noise: Variability in Human Judgment, Fallibility, and Strategies to Improve Accuracy. Radiology. 2022;302:511-512. |

| 5. | Bruno MA. 256 Shades of gray: uncertainty and diagnostic error in radiology. Diagnosis (Berl). 2017;4:149-157. |

| 6. | Uzor RB, Monu JUV, Pope TL. Long Bone Trauma: Radiographic Pitfalls. In: Peh W, editor. Pitfalls in Musculoskeletal Radiology. Cham: Springer, 2017. |

| 7. | Bruno MA, Walker EA, Abujudeh HH. Understanding and Confronting Our Mistakes: The Epidemiology of Error in Radiology and Strategies for Error Reduction. Radiographics. 2015;35:1668-1676. |

| 8. | Liawrungrueang W, Cholamjiak W, Promsri A, Jitpakdee K, Sunpaweravong S, Kotheeranurak V, Sarasombath P. Artificial Intelligence for Cervical Spine Fracture Detection: A Systematic Review of Diagnostic Performance and Clinical Potential. Global Spine J. 2025;15:2547-2558. |

| 9. | Christoforides EJ, Mulroy D, Oswald S, Rust BD, Muralidhar R, Jacobs RJ. Artificial Intelligence vs. Physician Expertise in Appendicular Skeleton Fracture Detection: A Scoping Review. J Am Osteopath Acad Orthop. 2024. |

| 10. | Anderson PG, Baum GL, Keathley N, Sicular S, Venkatesh S, Sharma A, Daluiski A, Potter H, Hotchkiss R, Lindsey RV, Jones RM. Deep Learning Assistance Closes the Accuracy Gap in Fracture Detection Across Clinician Types. Clin Orthop Relat Res. 2023;481:580-588. |

| 11. | Nowroozi A, Salehi MA, Shobeiri P, Agahi S, Momtazmanesh S, Kaviani P, Kalra MK. Artificial intelligence diagnostic accuracy in fracture detection from plain radiographs and comparing it with clinicians: a systematic review and meta-analysis. Clin Radiol. 2024;79:579-588. |

| 12. | Dell'Aria A, Tack D, Saddiki N, Makdoud S, Alexiou J, De Hemptinne FX, Berkenbaum I, Neugroschl C, Tacelli N. Radiographic Detection of Post-Traumatic Bone Fractures: Contribution of Artificial Intelligence Software to the Analysis of Senior and Junior Radiologists. J Belg Soc Radiol. 2024;108:44. |

| 13. | Oeding JF, Kunze KN, Messer CJ, Pareek A, Fufa DT, Pulos N, Rhee PC. Diagnostic Performance of Artificial Intelligence for Detection of Scaphoid and Distal Radius Fractures: A Systematic Review. J Hand Surg Am. 2024;49:411-422. |

| 14. | Bachmann R, Gunes G, Hangaard S, Nexmann A, Lisouski P, Boesen M, Lundemann M, Baginski SG. Improving traumatic fracture detection on radiographs with artificial intelligence support: a multi-reader study. BJR Open. 2024;6:tzae011. |

| 15. | Guermazi A, Tannoury C, Kompel AJ, Murakami AM, Ducarouge A, Gillibert A, Li X, Tournier A, Lahoud Y, Jarraya M, Lacave E, Rahimi H, Pourchot A, Parisien RL, Merritt AC, Comeau D, Regnard NE, Hayashi D. Improving Radiographic Fracture Recognition Performance and Efficiency Using Artificial Intelligence. Radiology. 2022;302:627-636. |

| 16. | Jung J, Dai J, Liu B, Wu Q. Artificial intelligence in fracture detection with different image modalities and data types: A systematic review and meta-analysis. PLOS Digit Health. 2024;3:e0000438. |

| 17. | Raisuddin AM, Vaattovaara E, Nevalainen M, Nikki M, Järvenpää E, Makkonen K, Pinola P, Palsio T, Niemensivu A, Tervonen O, Tiulpin A. Critical evaluation of deep neural networks for wrist fracture detection. Sci Rep. 2021;11:6006. |

| 18. | Husarek J, Hess S, Razaeian S, Ruder TD, Sehmisch S, Müller M, Liodakis E. Artificial intelligence in commercial fracture detection products: a systematic review and meta-analysis of diagnostic test accuracy. Sci Rep. 2024;14:23053. |

| 19. | Kuo RYL, Harrison C, Curran TA, Jones B, Freethy A, Cussons D, Stewart M, Collins GS, Furniss D. Artificial Intelligence in Fracture Detection: A Systematic Review and Meta-Analysis. Radiology. 2022;304:50-62. |

| 20. | Kraus M, Anteby R, Konen E, Eshed I, Klang E. Artificial intelligence for X-ray scaphoid fracture detection: a systematic review and diagnostic test accuracy meta-analysis. Eur Radiol. 2024;34:4341-4351. |

| 21. | Liu Y, Liu W, Chen H, Xie S, Wang C, Liang T, Yu Y, Liu X. Artificial intelligence versus radiologist in the accuracy of fracture detection based on computed tomography images: a multi-dimensional, multi-region analysis. Quant Imaging Med Surg. 2023;13:6424-6433. |

| 22. | J O, S L, S G, B H, S M N. An overview of the performance of AI in fracture detection in lumbar and thoracic spine radiographs on a per vertebra basis. Skeletal Radiol. 2024;53:1563-1571. |

| 23. | Rainey C, Mcconnell J, Hughes C, Bond R, Mcfadden S. Artificial intelligence for diagnosis of fractures on plain radiographs: A scoping review of current literature. Intell Based Med. 2021;5:100033. |

| 24. | Link TM, Pedoia V. Using AI to Improve Radiographic Fracture Detection. Radiology. 2022;302:637-638. |

| 25. | Lavanya MR, Praviraj M, Anusha V, Teja VS, Suresh P. Bone Fracture Detection System. Int J Sci Res Eng Manage. 2025;09:1-9. |

| 26. | Pauling C, Kanber B, Arthurs OJ, Shelmerdine SC. Commercially available artificial intelligence tools for fracture detection: the evidence. BJR Open. 2024;6:tzad005. |

| 27. | Canoni-Meynet L, Verdot P, Danner A, Calame P, Aubry S. Added value of an artificial intelligence solution for fracture detection in the radiologist's daily trauma emergencies workflow. Diagn Interv Imaging. 2022;103:594-600. |

| 28. | Novak A, Hollowday M, Espinosa Morgado AT, Oke J, Shelmerdine S, Woznitza N, Metcalfe D, Costa ML, Wilson S, Kiam JS, Vaz J, Limphaibool N, Ventre J, Jones D, Greenhalgh L, Gleeson F, Welch N, Mistry A, Devic N, Teh J, Ather S. Evaluating the impact of artificial intelligence-assisted image analysis on the diagnostic accuracy of front-line clinicians in detecting fractures on plain X-rays (FRACT-AI): protocol for a prospective observational study. BMJ Open. 2024;14:e086061. |

| 29. | Hsiao SK, Treat RM, Javan R. Establishing a Multi-Society Generative AI Task Force Within Radiology. Cureus. 2024;16:e64475. |