Published online Apr 19, 2026. doi: 10.5498/wjp.v16.i4.116428

Revised: December 7, 2025

Accepted: January 14, 2026

Published online: April 19, 2026

Processing time: 139 Days and 6 Hours

Adolescent depression is a pressing global public health challenge. Current screening largely depends on self-reported questionnaires, which are vulnerable to response biases and underreporting. Integrating objective behavioral signals with validated scales may bridge this subjective-objective gap and improve detection.

To develop a novel multimodal protocol integrating video-recorded facial ex

A total of 771 adolescents (aged 12-18 years, mean 15.23 ± 1.68) were recruited. Facial expressions, reading-aloud voices, and CSSSDS scale data were collected from all participants. Five machine learning algorithms [extreme gradient bo

Statistical analysis confirmed XGBoost as the preferred algorithm in both multimodal and bimodal protocols, showing statistically significant superiority (P < 0.05) across several key metrics (multimodal recall and F1 score; bimodal AUC-ROC, AUC-PR, and F1 score). In stark contrast, the artificial neural network exhibited high volatility and low precision despite achieving perfect recall in both protocols (all P < 0.001). Statistical comparisons further confirmed the superiority of the multimodal XGBoost over its bimodal counterpart, demonstrating higher AUC-ROC (t = 4.52, P < 0.001) and AUC-PR (t = 3.87, P < 0.001), both with large effect sizes (Cohen’s d > 1.0). The multimodal model also demonstrated significantly greater stability in core discriminative metrics (AUC-ROC, AUC-PR, and recall; all P < 0.05).

The XGBoost-driven multimodal model demonstrated superior discriminative power, greater stability, and a balanced precision-recall profile compared with bimodal models and other algorithms. Nevertheless, limitations related to sample size, use of a region-specific scale, and task-driven data collection mean that further validation in larger, more diverse, and ecologically valid settings is warranted.

Core Tip: Current screening for adolescent depression relies heavily on subjective questionnaires. Therefore, we developed a multimodal protocol combining the Chinese Secondary School Students Depression Scale with objective facial and vocal data to improve detection. Our analysis showed that extreme gradient boosting outperformed other machine learning models under multimodal and bimodal settings, achieving superior performance across multiple metrics. Statistical comparisons confirmed that the multimodal extreme gradient boosting model significantly surpassed its bimodal counterpart, de

- Citation: Zeng Y, Yang J, Kuang L. Bridging the gap between subjective and objective measures: A multimodal protocol for adolescent depression detection. World J Psychiatry 2026; 16(4): 116428

- URL: https://www.wjgnet.com/2220-3206/full/v16/i4/116428.htm

- DOI: https://dx.doi.org/10.5498/wjp.v16.i4.116428

Mental disorders rank among the top ten contributors to the global disease burden, with depression standing out as exceptionally prevalent[1]. Depression is characterized by persistent sadness, loss of interest, and, in severe cases, suicidal thoughts, all of which substantially impair quality of life[2]. Over recent decades, adolescent depression rates have risen notably worldwide[3]. In China, the pooled prevalence of depressive symptoms among adolescents reached 24.6% in 2020[4], while self-reported severe depressive symptoms affected 7.4% of secondary school students in certain regions[5]. Similarly, in the United States, the prevalence of past-year major depressive episodes among adolescents climbed from 8.1% in 2009 to 15.8% in 2019, with a particularly sharp rise among females (from 11.4% to 23.4%)[6]. Early identification is therefore critical, as untreated depression in adolescence is associated with poor academic performance, substance abuse, elevated suicide risk, and increased burdens on families and society[7-9].

Nevertheless, diagnosing adolescent depression remains challenging. Adolescents often underreport symptoms because of stigma, and clinicians struggle to distinguish depression from normal mood fluctuations[10]. Conventional tools, such as the Patient Health Questionnaire-9 or structured interviews, focus mainly on symptom frequency and often overlook contextual and behavioral dynamics[11,12]. This limitation has spurred interest in computational methods that use multimodal data to detect subtle, cross-modal markers of depression.

Multimodal emotion recognition is an interdisciplinary field that integrates speech, facial expressions, text, physiological signals (e.g., electroencephalography and heart rate), and gestures to infer human emotional states[13,14]. Although multimodal emotion recognition has shown efficacy in adults, adolescent-specific research remains scarce[15,16]. Adolescents exhibit heterogeneous depressive symptoms, social behaviors, and emotion-regulation patterns, which call for tailored models, multimodal integration, ethically curated datasets, and refined evaluation approaches[17-19].

To address these needs, this study developed a multimodal protocol that combined objective behavioral indicators (video-recorded facial expressions and speech from standardized reading tasks) with the Chinese Secondary School Students Depression Scale (CSSSDS). This integration aimed to mitigate self-report bias and enhance diagnostic accuracy for adolescents in Mainland China.

Ethics approval was obtained from the Ethics Committee of the Second Affiliated Hospital of Chongqing Medical University (approval No. 2023-6). Participants were recruited through the hospital’s outpatient department and local secondary schools in Chongqing. Eligible participants first completed video recordings, text-based reading tasks, and standardized scale assessments. They then underwent a Structured Clinical Interview for the Diagnostic and Statistical Manual of Mental Disorders, conducted jointly by two licensed psychiatrists (each with > 5 years of clinical experience), to confirm diagnoses and annotate the data. In cases of diagnostic disagreement, a third senior psychiatrist (holding a senior professional title with > 15 years of clinical experience) served as arbitrator to reach a conclusive assessment. Upon completing all study components, each participant received a professional psychological evaluation report with personalized recommendations.

Inclusion criteria: (1) Aged 12-18 years; (2) Proficiency in spoken Mandarin; (3) Enrollment in a secondary school in Mainland China; and (4) Signed informed consent from both the legal guardian and the participant.

Exclusion criteria: (1) Age < 12 or > 18 years; (2) Lack of signed informed consent; (3) Incomplete video, text, or scale data or failure to extract standardized data; (4) Incomplete interviews by the research team; and (5) Other circumstances deemed unsuitable for study participation.

From previously established video and text repositories, we preselected 15 video clips (60-90 s each) and 16 text passages (237 ± 50 Chinese characters). An expert panel comprising two psychologists and three secondary-school teachers scored each candidate on five equally weighted dimensions (20% each): (1) Cultural appropriateness (free from regional or dialect barriers); (2) Age suitability (appropriate for 12- to 18-year-olds); (3) Ethical safety (aligned with study ethics requirements); (4) Clarity of humor (understandable without background knowledge); and (5) Technical feasibility (facial occlusion < 15%). The two highest-ranked video clips (66 s and 80 s) and four text passages (114, 256, 274, and 297 characters) were retained.
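The selection rule above (five dimensions weighted 20% each, then keep the top-ranked items) can be sketched as a weighted composite score. This is a minimal illustration under stated assumptions, not the panel's actual scoring sheet: the 0-10 rating scale, the averaging across raters, and the clip names and ratings are all hypothetical.

```python
from statistics import mean

# Five dimensions from the protocol, each weighted 20%.
WEIGHTS = {
    "cultural_appropriateness": 0.2,
    "age_suitability": 0.2,
    "ethical_safety": 0.2,
    "clarity_of_humor": 0.2,
    "technical_feasibility": 0.2,
}


def composite_score(panel_ratings):
    """Average each dimension across raters, then apply the dimension weights.

    panel_ratings: one dict per rater mapping dimension -> rating
    (a hypothetical 0-10 rating scale is assumed).
    """
    return sum(
        weight * mean(rater[dim] for rater in panel_ratings)
        for dim, weight in WEIGHTS.items()
    )


def top_k(candidates, k):
    """Return the IDs of the k candidates with the highest composite score."""
    ranked = sorted(candidates, key=lambda cid: composite_score(candidates[cid]), reverse=True)
    return ranked[:k]


def uniform(rating):
    """Hypothetical helper: one rater giving the same rating on every dimension."""
    return {dim: rating for dim in WEIGHTS}


# Hypothetical ratings from two raters for three candidate clips.
candidates = {
    "clip_A": [uniform(8), uniform(9)],
    "clip_B": [uniform(6), uniform(7)],
    "clip_C": [uniform(9), uniform(9)],
}
print(top_k(candidates, 2))  # ['clip_C', 'clip_A']
```

With equal weights the composite is simply the mean rating across dimensions; keeping an explicit weight table makes it easy to see how an unequal weighting would change the ranking.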

Before data collection, participants received a full procedural briefing, including the option to withdraw at any time using a preassigned “emotional safe word”. Recording conditions were standardized: uniform lighting (500 ± 50 lx), ambient noise < 40 dB, and video captured at 1080p and 30 fps.

Before facial expression recording, participants were positioned facing the camera with the face fully visible and unobstructed, occupying more than 60% of the frame. A custom application prompted participants to grant camera and microphone access; data collection began only after consent was confirmed. Participants were then guided to hold a 5-s static neutral expression with their lips closed. During recording, automatic pausing was triggered by facial occlusion (e.g., by hands, hair, or objects), departure from the camera’s field of view, or excessive head rotation that impaired facial visibility. A prompt (“Please ensure your entire face remains visible within the camera frame”) was displayed until compliance was restored, after which recording resumed. Before the text-reading task, participants read three digits aloud to check the audio environment. Successful validation unlocked the subsequent text passages, with the character count displayed on-screen.
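The environment checks and the automatic pause-and-resume rule described above amount to a simple gate evaluated on every frame. The sketch below assumes a hypothetical per-frame record (face-in-view flag, face-coverage fraction, occlusion flag, head yaw and pitch in degrees) produced by some upstream face tracker; the 30° rotation limit is an illustrative placeholder because the protocol does not state a threshold, and the three-digit audio check is not modeled.

```python
from dataclasses import dataclass

# Thresholds taken from the protocol text, except MAX_HEAD_ROTATION_DEG,
# which is a hypothetical placeholder (the protocol gives no number).
LUX_RANGE = (450.0, 550.0)     # uniform lighting of 500 ± 50 lx
MAX_NOISE_DB = 40.0            # ambient noise must stay below 40 dB
MIN_FACE_COVERAGE = 0.60       # face must occupy more than 60% of the frame
MAX_HEAD_ROTATION_DEG = 30.0   # assumption, not from the source

PROMPT = "Please ensure your entire face remains visible within the camera frame"


@dataclass
class Frame:
    """Per-frame output of some upstream face tracker (hypothetical fields)."""
    face_in_view: bool
    face_coverage: float  # fraction of the frame occupied by the face
    occluded: bool        # face covered by hands, hair, or objects
    yaw_deg: float
    pitch_deg: float


def environment_ok(lux: float, noise_db: float) -> bool:
    """Check the standardized lighting and noise conditions before recording."""
    lo, hi = LUX_RANGE
    return lo <= lux <= hi and noise_db < MAX_NOISE_DB


def frame_ok(f: Frame) -> bool:
    """Return True if recording may continue on this frame."""
    return (
        f.face_in_view
        and f.face_coverage > MIN_FACE_COVERAGE
        and not f.occluded
        and abs(f.yaw_deg) <= MAX_HEAD_ROTATION_DEG
        and abs(f.pitch_deg) <= MAX_HEAD_ROTATION_DEG
    )


def run_session(frames):
    """Yield ("record", index) for valid frames and ("pause", PROMPT) otherwise.

    Because every frame is re-checked, recording resumes automatically as soon
    as a frame passes again, mirroring the pause-and-resume rule.
    """
    for i, f in enumerate(frames):
        if frame_ok(f):
            yield ("record", i)
        else:
            yield ("pause", PROMPT)


good = Frame(True, 0.70, False, 5.0, 2.0)
hand_over_face = Frame(True, 0.70, True, 5.0, 2.0)
events = [kind for kind, _ in run_session([good, hand_over_face, good])]
print(events)  # ['record', 'pause', 'record']
```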

Depressive symptoms were assessed using the Chinese Secondary School Students Depression Scale (CSSSDS; Wang JS), a 20-item, 5-point instrument with excellent internal consistency (Cronbach’s alpha = 0.944)[20]. The total score was computed as the mean of all items; a cutoff score of 2 or higher indicates clinically significant depressive symptomatology.
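The scoring rule (mean of the 20 items, with a mean of 2 or higher counted as a positive screen) is short enough to express directly. This sketch assumes items are coded 1-5 and that no items are reverse-scored; both points should be checked against the published CSSSDS scoring key before any reuse.

```python
from statistics import mean

N_ITEMS = 20
ITEM_MIN, ITEM_MAX = 1, 5   # assumption: 5-point items coded 1-5
CUTOFF = 2.0                # mean score >= 2 indicates clinically significant symptoms


def csssds_score(responses):
    """Return (mean item score, positive-screen flag) for one participant."""
    if len(responses) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} item responses, got {len(responses)}")
    if any(not ITEM_MIN <= r <= ITEM_MAX for r in responses):
        raise ValueError("item response outside the 1-5 range")
    score = mean(responses)
    return score, score >= CUTOFF


score, positive = csssds_score([2] * 10 + [1] * 10)
print(score, positive)  # 1.5 False
```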

Video, audio, and CSSSDS scores were compiled for all participants. Those who did not complete the protocol or whose data were unsuitable for feature extraction were excluded. A multimodal database was then built from the eligible samples. Per the study design, this database was randomly split into training and validation sets at a 70:30 ratio for subsequent model development and evaluation.
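A 70:30 random split can be sketched with the standard library alone. The seed is arbitrary, and the rule of rounding the validation share up is our assumption; with 771 participants it yields 232 validation and 539 training samples, the same 539 that appears as the largest training size in the learning-curve results. The source does not say whether the split was stratified by diagnosis, so this sketch shuffles without stratification.

```python
import math
import random


def split_70_30(ids, seed=2023):
    """Randomly split participant IDs into training (70%) and validation (30%) sets.

    The validation size is rounded up; the seed value is arbitrary.
    """
    ids = list(ids)
    rng = random.Random(seed)
    rng.shuffle(ids)
    n_val = math.ceil(0.30 * len(ids))
    return ids[n_val:], ids[:n_val]  # (train, validation)


train, val = split_70_30(range(771))
print(len(train), len(val))  # 539 232
```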

Two analytical frameworks were established: a multimodal protocol incorporating vocal characteristics, facial expressions, and CSSSDS scores, and a bimodal protocol using only vocal and facial data.

Analyses were conducted using SPSS 28.0 software and Python (version 3.13.0; https://www.python.org/). Continuous variables are presented as mean ± SD and categorical variables as n (%). Continuous variables were compared using the independent-samples t test and categorical variables using Pearson’s χ2 test. Univariable logistic regression examined associations between demographic factors and depression diagnosis, with results expressed as odds ratios (ORs) and 95% confidence intervals (CIs). Cross-fold means and SDs of performance metrics were calculated. Independent-samples Welch’s t tests compared these means between protocols, with Cohen’s d quantifying effect size, and Levene’s test assessed the homogeneity of variance across folds. Statistical significance was set at P < 0.05.
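The three between-protocol statistics named above can be written out explicitly, which makes their inputs (per-fold metric values for each protocol) clear. This is a standard-library sketch, not the authors' code: it returns test statistics only. For P values, `scipy.stats.ttest_ind(a, b, equal_var=False)` gives Welch's test and `scipy.stats.levene(a, b, center="mean")` gives the classic mean-centred Levene test (SciPy's default, `center="median"`, is the Brown-Forsythe variant; the source does not say which was used). Cohen's d here uses the pooled SD, one of several variants; with equal numbers of folds per protocol it reduces to the root mean of the two variances, so t = d·√(n/2).

```python
import math
from statistics import mean, variance


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    se2_a, se2_b = variance(a) / len(a), variance(b) / len(b)
    t = (mean(a) - mean(b)) / math.sqrt(se2_a + se2_b)
    df = (se2_a + se2_b) ** 2 / (
        se2_a ** 2 / (len(a) - 1) + se2_b ** 2 / (len(b) - 1)
    )
    return t, df


def cohens_d(a, b):
    """Cohen's d with the pooled standard deviation (one common variant)."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(a) - mean(b)) / math.sqrt(pooled_var)


def levene_w(*groups):
    """Levene's W using absolute deviations from each group mean.

    Compare W with an F(k - 1, N - k) distribution to obtain a P value.
    """
    z = [[abs(x - mean(g)) for x in g] for g in groups]
    k = len(z)
    n = sum(len(zi) for zi in z)
    z_bar = mean(v for zi in z for v in zi)
    between = sum(len(zi) * (mean(zi) - z_bar) ** 2 for zi in z)
    within = sum((v - mean(zi)) ** 2 for zi in z for v in zi)
    return (n - k) / (k - 1) * between / within


# Toy per-fold AUC-ROC values (hypothetical, not the study's data).
multimodal = [0.99, 0.98, 0.99, 1.00, 0.99]
bimodal = [0.96, 0.93, 0.99, 0.95, 0.97]
t, df = welch_t(multimodal, bimodal)
print(f"t = {t:.2f}, df = {df:.1f}, d = {cohens_d(multimodal, bimodal):.2f}, "
      f"W = {levene_w(multimodal, bimodal):.2f}")
```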

The sample included 771 participants aged 12-18 years (mean 15.23 ± 1.68). Sex ratios (male/female) were 126/417 in the depression group and 119/109 in the non-depression group. Educational status (junior high/senior high school) was 242/301 and 148/80 in the two groups, respectively. Univariable analysis showed that both gender and education level were significantly associated with depression. Females had 3.61-fold higher odds of depression than males (OR = 3.61, 95%CI: 2.60-5.01), and senior high school students had 2.30-fold higher odds than junior high school students (OR = 2.30, 95%CI: 1.67-3.17) (Table 1).

| Characteristic | Depression group | Non-depression group | P value | OR (95%CI) |
| --- | --- | --- | --- | --- |
| Number | 543 | 228 | | |
| Age (years), mean ± SD | 15.20 ± 1.69 | 15.30 ± 1.67 | 0.460 | |
| Gender, n (%) | | | | |
| Male | 126 (23.20) | 119 (52.19) | < 0.001 | 3.61 (2.60-5.01) |
| Female | 417 (76.80) | 109 (47.81) | | |
| Education, n (%) | | | | |
| Junior high school | 242 (44.57) | 148 (64.91) | < 0.001 | 2.30 (1.67-3.17) |
| High school | 301 (55.43) | 80 (35.09) | | |
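For a single binary predictor, univariable logistic regression gives the same odds ratio as the 2×2 cross-product ratio, and its Wald interval equals the Woolf interval, exp(ln OR ± 1.96·√(1/a + 1/b + 1/c + 1/d)). The sketch below applies that formula to the Table 1 counts as an arithmetic check; the function name is ours, not from the study.

```python
import math


def odds_ratio_wald(a, b, c, d, z=1.96):
    """Odds ratio and Wald (Woolf) 95% CI for a 2x2 table.

    a: exposed cases, b: exposed non-cases,
    c: unexposed cases, d: unexposed non-cases.
    """
    or_ = (a * d) / (b * c)
    se = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)  # SE of ln(OR)
    lo = math.exp(math.log(or_) - z * se)
    hi = math.exp(math.log(or_) + z * se)
    return or_, lo, hi


# Counts from Table 1 (depression / non-depression).
female = odds_ratio_wald(417, 109, 126, 119)   # female vs male
senior = odds_ratio_wald(301, 80, 242, 148)    # senior high vs junior high
for label, (or_, lo, hi) in [("Female", female), ("Senior high", senior)]:
    print(f"{label}: OR = {or_:.2f} (95%CI: {lo:.2f}-{hi:.2f})")
# Female: OR = 3.61 (95%CI: 2.60-5.01)
# Senior high: OR = 2.30 (95%CI: 1.67-3.17)
```

Both intervals reproduce the values reported in Table 1.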

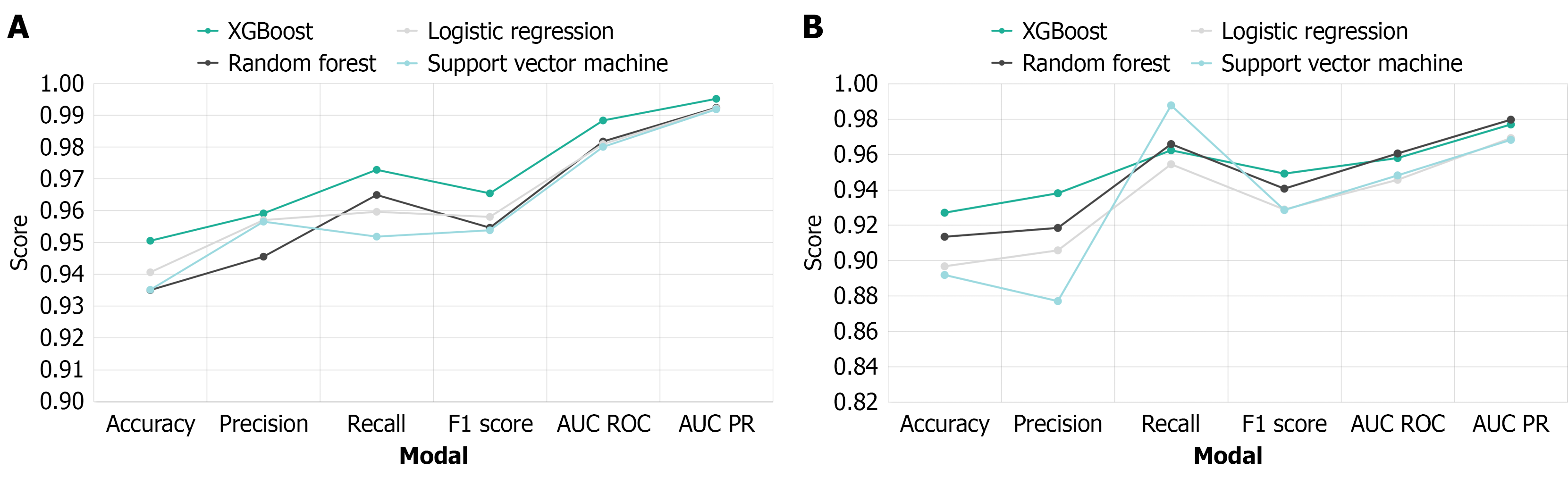

Table 2 summarizes performance under the multimodal protocol. XGBoost achieved the best results: 95.05% accuracy, 95.91% precision, 97.28% recall, 96.54% F1 score, and 98.83% AUC-ROC (Figure 1A). In contrast, the artificial neural network (ANN) achieved perfect recall but the lowest precision (73.33%, P < 0.001; Table 2). Table 3 summarizes performance under the bimodal protocol. XGBoost again performed best overall: 92.70% accuracy, 93.80% precision, 96.23% recall, 94.91% F1 score, and 95.79% AUC-ROC (Figure 1B). Although ANN again achieved perfect recall (100%), it showed the lowest precision (70.50%, P < 0.001; Table 3).

| Algorithm (multimodal) | Accuracy | Precision | Recall | F1 score | AUC-ROC | AUC-PR |
| --- | --- | --- | --- | --- | --- | --- |
| XGBoost | 0.95 ± 0.03 | 0.96 ± 0.03 | 0.97 ± 0.02a | 0.97 ± 0.02a | 0.99 ± 0.01 | 1.00 ± 0.00 |
| Random forest | 0.94 ± 0.03 | 0.95 ± 0.03 | 0.96 ± 0.03 | 0.95 ± 0.02 | 0.98 ± 0.01 | 0.99 ± 0.01 |
| Logistic regression | 0.94 ± 0.03 | 0.96 ± 0.03 | 0.96 ± 0.03 | 0.96 ± 0.02 | 0.98 ± 0.01 | 0.99 ± 0.01 |
| Support vector machine | 0.94 ± 0.03 | 0.96 ± 0.03 | 0.95 ± 0.03 | 0.95 ± 0.02 | 0.98 ± 0.01 | 0.99 ± 0.01 |
| Artificial neural networks | 0.74 ± 0.03c | 0.73 ± 0.02c | 1.00 ± 0.00c | 0.85 ± 0.01c | 0.78 ± 0.08c | 0.85 ± 0.06c |

| Algorithm (bimodal) | Accuracy | Precision | Recall | F1 score | AUC-ROC | AUC-PR |
| --- | --- | --- | --- | --- | --- | --- |
| XGBoost | 0.93 ± 0.03c | 0.94 ± 0.04c | 0.96 ± 0.03 | 0.95 ± 0.02a | 0.96 ± 0.04a | 0.98 ± 0.03a |
| Random forest | 0.91 ± 0.04 | 0.92 ± 0.05 | 0.97 ± 0.03 | 0.94 ± 0.03 | 0.96 ± 0.03 | 0.98 ± 0.02 |
| Logistic regression | 0.90 ± 0.04 | 0.91 ± 0.04 | 0.95 ± 0.04 | 0.93 ± 0.03 | 0.95 ± 0.03 | 0.97 ± 0.02 |
| Support vector machine | 0.89 ± 0.04 | 0.88 ± 0.04 | 0.99 ± 0.01 | 0.93 ± 0.02 | 0.95 ± 0.04 | 0.97 ± 0.03 |
| Artificial neural networks | 0.71 ± 0.00c | 0.71 ± 0.04c | 1.00 ± 0.00c | 0.83 ± 0.00c | 0.83 ± 0.06c | 0.92 ± 0.03c |
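The threshold-based metrics in Tables 2 and 3 follow the standard confusion-matrix definitions, with depression as the positive class (AUC-ROC and AUC-PR need ranked scores and are not computed here). As a worked check, applying them to a hypothetical classifier that labels all 771 participants as depressed (543 true positives, 228 false positives) gives recall 1.00, precision about 0.70, and F1 about 0.83. These are close to the ANN rows, which illustrates why perfect recall with precision near the class prevalence implies many false positives; it does not show that the ANN actually predicted every case as positive.

```python
def classification_metrics(tp, fp, fn, tn):
    """Accuracy, precision, recall, and F1 for the positive (depression) class."""
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical "everyone is depressed" baseline on the full cohort (543 vs 228).
baseline = classification_metrics(tp=543, fp=228, fn=0, tn=0)
print({k: round(v, 2) for k, v in baseline.items()})
# {'accuracy': 0.7, 'precision': 0.7, 'recall': 1.0, 'f1': 0.83}
```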

A 10-fold cross-validation procedure was repeated three times to obtain robust performance estimates. Under the multimodal protocol, the results confirmed the robustness of XGBoost: its AUC-ROC had a notably low SD (0.0089), smaller than that of all comparator models, indicating stable generalization across data subsets (Table 2). In the bimodal setting, cross-validation further supported the consistent performance of XGBoost. Although reducing the inputs from multimodal to bimodal led to slightly greater performance volatility across all models, XGBoost still exhibited the lowest variance among the bimodal methods (Table 3).
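Repeated k-fold cross-validation (here 3 repeats × 10 folds = 30 folds) can be sketched as reshuffling the sample order once per repeat and slicing it into k folds; the per-fold metric values are then summarized as mean, SD, and coefficient of variation (SD/mean). This is a plain, unstratified sketch with an arbitrary seed, and n = 539 is only an example size; the source does not say whether folds were stratified. A library alternative that preserves class proportions is scikit-learn's `RepeatedStratifiedKFold(n_splits=10, n_repeats=3)`.

```python
import random
from statistics import mean, stdev


def repeated_kfold(n, k=10, repeats=3, seed=0):
    """Yield (repeat, fold, train_idx, test_idx) for repeated k-fold CV.

    Each repeat reshuffles the sample order, so every sample is tested
    exactly once per repeat and k * repeats folds are produced in total.
    """
    for r in range(repeats):
        order = list(range(n))
        random.Random(seed + r).shuffle(order)
        folds = [order[i::k] for i in range(k)]  # k folds of near-equal size
        for f, test_idx in enumerate(folds):
            held_out = set(test_idx)
            train_idx = [i for i in order if i not in held_out]
            yield r, f, train_idx, test_idx


def summarize(scores):
    """Mean, SD, and coefficient of variation (SD / mean) across folds."""
    m, s = mean(scores), stdev(scores)
    return m, s, s / m


splits = list(repeated_kfold(539))  # 539 is only an example size
print(len(splits))  # 30
```

A model would be fitted on each `train_idx`, scored on the matching `test_idx`, and the 30 resulting scores passed to `summarize` to produce mean ± SD values and coefficients of variation of the kind reported in the tables and figures.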

Across cross-validation analyses, ANN showed substantially higher volatility in both the multimodal and bimodal settings. In the multimodal condition, its AUC-ROC and AUC-PR SDs (0.0754 and 0.0591, respectively) were several times those of the other models. This pronounced variability suggests that ANN is highly sensitive to how the training data are partitioned, reflecting compromised generalizability and greater instability (Table 2). Although the performance gap narrowed in the bimodal experiments, ANN remained the least stable of all methods evaluated (Table 3).

Independent-samples Welch’s t tests revealed consistent performance advantages of the multimodal model over the bimodal model across nearly all metrics (Table 4). The multimodal model demonstrated substantially higher discriminative ability, with significantly greater AUC-ROC [t = 4.52, P < 0.001, Cohen’s d (hereafter d) = 1.17] and AUC-PR (t = 3.87, P < 0.001, d = 1.04), both with large effect sizes. Significant improvements with moderate-to-large effect sizes were also observed in accuracy (t = 2.95, P = 0.005, d = 0.76) and F1 score (t = 2.95, P = 0.005, d = 0.74). The multimodal model also achieved higher precision (t = 2.18, P = 0.034, d = 0.56) while maintaining recall comparable to that of the bimodal model (t = 1.51, P = 0.137, d = 0.39), indicating no loss of detection sensitivity. This gain in precision without a loss of recall indicates that the multimodal model reduced false positives without compromising true-positive identification, yielding more robust and effective classification.

| Metric | Multimodal | Bimodal | t value | P value | Cohen’s d | Effect size |
| --- | --- | --- | --- | --- | --- | --- |
| AUC-ROC | 0.99 ± 0.01 | 0.96 ± 0.04 | 4.52 | < 0.001 | 1.17 | Large |
| AUC-PR | 0.99 ± 0.00 | 0.98 ± 0.03 | 3.87 | < 0.001 | 1.04 | Large |
| Accuracy | 0.95 ± 0.03 | 0.93 ± 0.03 | 2.95 | 0.005 | 0.76 | Moderate-to-large |
| Precision | 0.96 ± 0.03 | 0.94 ± 0.04 | 2.18 | 0.034 | 0.56 | Moderate |
| Recall | 0.97 ± 0.02 | 0.96 ± 0.03 | 1.51 | 0.137 | 0.39 | Small-to-moderate |
| F1 score | 0.97 ± 0.02 | 0.95 ± 0.02 | 2.95 | 0.005 | 0.74 | Moderate-to-large |

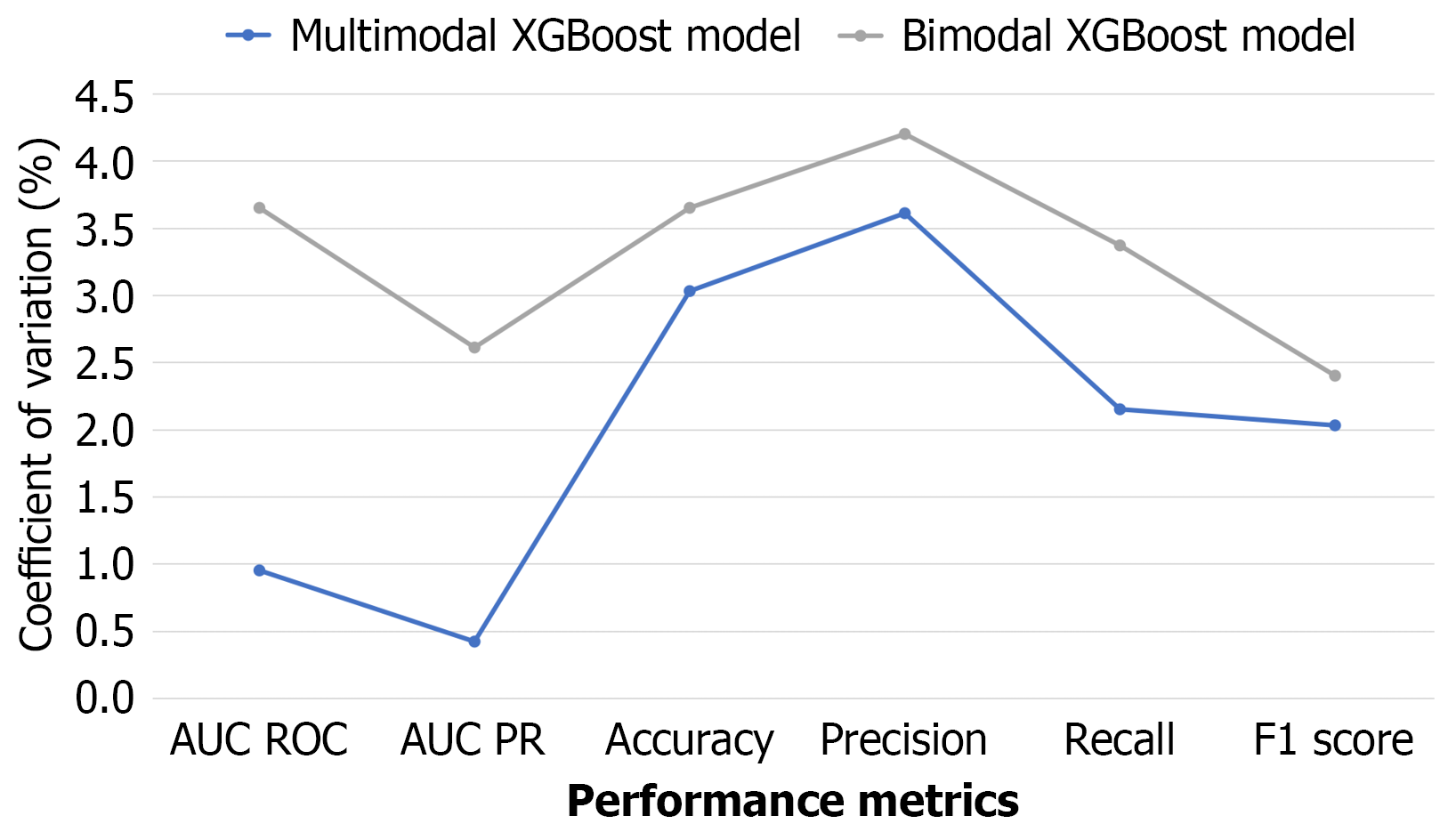

Levene’s test showed that the multimodal model had significantly lower variance (greater stability) on three key metrics: AUC-ROC (P < 0.001), AUC-PR (P < 0.001), and recall (P = 0.036). For accuracy, precision, and F1 score, no statistically significant difference in stability was found (all P > 0.05) (Table 5). These findings were further supported by coefficients of variation: the multimodal model produced lower coefficient of variation values than the bimodal model across all performance metrics, indicating more consistent and reliable outputs (Figure 2).

| Metric | Levene’s test P value | Interpretation |
| --- | --- | --- |
| AUC-ROC | < 0.001 | Significant difference |
| AUC-PR | < 0.001 | Significant difference |
| Accuracy | 0.39 | No significant difference |
| Precision | 0.55 | No significant difference |
| Recall | 0.036 | Significant difference |
| F1 score | 0.49 | No significant difference |

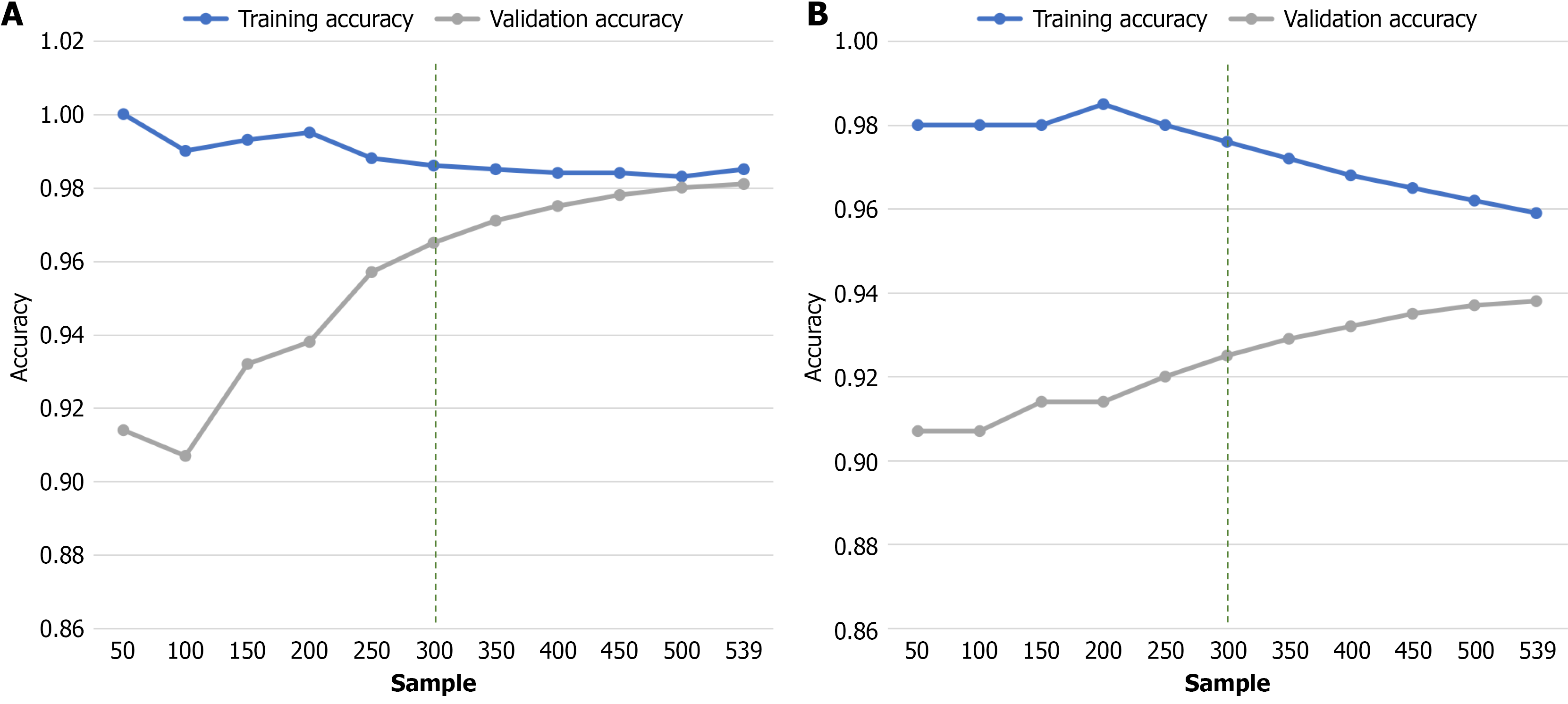

Learning curve analysis revealed that validation performance plateaued at approximately 300 training samples under both protocols (Figure 3). Under the multimodal protocol, validation accuracy increased sharply up to 250 samples and then rose only marginally, from 0.965 (300 samples) to 0.981 (539 samples), a gain of < 0.007 per 100 samples (Figure 3A). A similar pattern was observed under the bimodal protocol: accuracy reached 0.925 at 300 samples and improved by < 0.006 per 100 samples thereafter (Figure 3B). In both cases, the incremental benefit beyond 300 samples was minimal, suggesting that the current dataset is sufficient for stable training.
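The per-100-sample figure for the multimodal curve can be checked from the two reported points: a rise of 0.016 over 239 additional samples is about 0.0067 per 100 samples, consistent with the stated bound of < 0.007. This is an average over the interval, so it does not rule out a locally steeper segment; the bimodal end value is not reported, so that curve cannot be checked the same way.

```python
def gain_per_100(acc_start, acc_end, n_start, n_end):
    """Average validation-accuracy gain per 100 additional training samples."""
    return (acc_end - acc_start) / (n_end - n_start) * 100


# Multimodal values reported for Figure 3A.
print(round(gain_per_100(0.965, 0.981, 300, 539), 4))  # 0.0067
```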

Both protocols showed continued learning, with validation accuracy improving as the sample size increased. The multimodal protocol also demonstrated superior generalization and greater resistance to overfitting: the gap between its training and validation accuracy narrowed rapidly from 0.086 at 50 samples to 0.004 at 539 samples (Figure 3A), indicating good generalization. In contrast, although the bimodal protocol did not overfit severely, its declining training accuracy and stagnant validation performance suggest limited capacity to extract complex patterns from additional data.

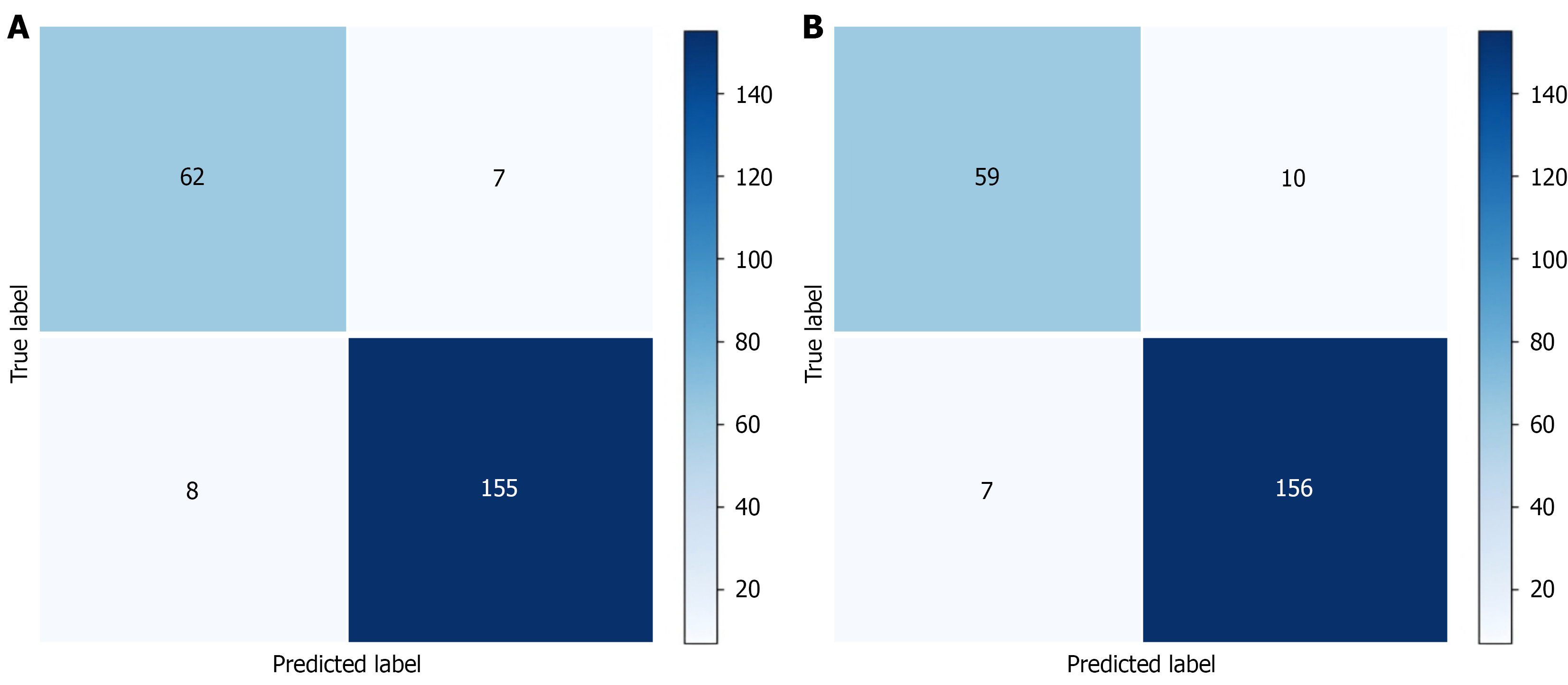

Each row of the confusion matrix corresponds to a predicted category and each column to a true label (Figure 4). A descriptive analysis revealed a consistent performance advantage for the multimodal protocol. For the detection of adolescent depression, the multimodal model achieved a higher correct classification rate (89.9%) than the bimodal model (85.5%) and produced fewer total misclassifications (15 vs 17). This pattern suggests that modality integration may enhance classification robustness, although this descriptive difference requires further statistical validation with larger samples.
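Because Figure 4 places predictions in rows and true labels in columns, while scikit-learn's `sklearn.metrics.confusion_matrix` uses the transposed layout (rows are true labels), cells are easy to misread when reproducing such figures. The sketch below builds the matrix in the stated orientation and counts the off-diagonal cells, which is what the misclassification totals count; the labels are toy values, not study data.

```python
def confusion_matrix_pred_rows(y_true, y_pred, labels=(0, 1)):
    """Confusion matrix with rows = predicted label and columns = true label.

    This is the transpose of sklearn.metrics.confusion_matrix's layout.
    """
    index = {lab: i for i, lab in enumerate(labels)}
    m = [[0] * len(labels) for _ in labels]
    for t, p in zip(y_true, y_pred):
        m[index[p]][index[t]] += 1
    return m


def misclassifications(m):
    """Total count in the off-diagonal cells."""
    return sum(m[i][j] for i in range(len(m)) for j in range(len(m)) if i != j)


cm = confusion_matrix_pred_rows([1, 1, 1, 0, 0], [1, 0, 1, 1, 0])
print(cm, misclassifications(cm))  # [[1, 1], [1, 2]] 2
```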

The complexity and heterogeneity of adolescent depressive symptoms pose significant challenges for emotion recognition[21]. Prior work indicated that multimodal markers, such as speech prosody, facial microexpressions, and social media language patterns, may reveal latent depressive states more reliably than unimodal analyses[22,23]. Building on this foundation, we developed and validated a novel multimodal protocol that integrates objective behavioral markers (facial expressions and vocal characteristics) with the CSSSDS. Our findings demonstrated the superior performance of the multimodal protocol, particularly when driven by the XGBoost algorithm, highlighting the diagnostic value of combining digital behavioral phenotyping with traditional psychometric scales. This approach offers an alternative to the current reliance on subjective reports in adolescent depression screening.

Our study addressed a key methodological question: whether adding a standardized scale improves models that rely solely on vocal prosody and facial microexpressions. The multimodal protocol significantly outperformed the bimodal protocol on key metrics (accuracy, precision, F1 score, AUC-ROC, and AUC-PR) while also reducing variance. Including CSSSDS scores therefore enhanced discriminative power without compromising sensitivity, yielding a more robust and comprehensive assessment system. Levene’s test confirmed greater stability for the multimodal protocol: variances in AUC-ROC, AUC-PR, and recall were significantly lower, whereas no significant differences were observed for accuracy, precision, or F1 score. This pattern suggests that integrating the self-report scale not only boosts discriminatory power but also buffers the model against performance fluctuations in its core discriminative functions. The marked variance reduction in these three metrics indicates that augmenting the vocal and facial inputs with the CSSSDS substantially reduced model instability in core discriminative tasks. In particular, the lower recall variance indicates that the multimodal pipeline was less sensitive to data partitioning at the critical decision point of true-positive identification, reducing the risk of unexpectedly missed cases[24]. In real-world campus or clinical batch-screening programs, a low-variance, high-recall approach supports more stable referral decisions and predictable resource planning.

The consistent advantage of the multimodal protocol can be attributed to the complementary nature of its data streams[25]. Because adolescent depressive symptoms are complex and heterogeneous, no single expressive channel is universally reliable. As noted in prior studies, vocal prosody and facial microexpressions act as nonconscious behavioral indicators of latent depression that may be underreported or missed in subjective self-disclosure[26]. The CSSSDS, developed and validated for Chinese adolescents, offers a culturally appropriate screening framework while objective digital phenotyping provides a window into behavioral dynamics. Together they create a more holistic and resilient assessment profile that is less vulnerable to the underreporting bias common in this population. Therefore, our multimodal protocol holds substantial promise for real-world depression screening and comprehensive diagnosis among Chinese adolescents. It offers a pathway to address a key limitation of current screening methods that rely predominantly on subjective self-report scales. Our findings also identified female gender (OR = 3.61, 95%CI: 2.60-5.01) and senior high school stage (OR = 2.30, 95%CI: 1.67-3.17) as predictors of depression risk, consistent with earlier reports[27]. These results underscore the need for enhanced screening in these subgroups and for tools that capture subtle, context-dependent depressive cues beyond conventional questionnaires.

This study compared the classification performance of a multimodal protocol (integrating vocal features, facial expressions, and CSSSDS scores) with a bimodal protocol (using only vocal and facial data) across five machine learning algorithms. XGBoost consistently outperformed all other models under both protocols. Notably, the multimodal XGBoost achieved a higher AUC-ROC with remarkably low SD in the 10-fold cross-validation, reflecting superior generalizability and stability. In contrast, ANN achieved perfect recall but showed low precision under both protocols, indicating a high false positive rate and limited generalizability.

The strong performance of XGBoost can be explained by several methodological advantages that are well-suited to our multimodal dataset. First, its tree-based gradient-boosting framework naturally handles heterogeneous inputs (con

Several limitations should be acknowledged. First, participants were recruited from one geographic region in China, and the CSSSDS scale was developed specifically for Chinese secondary school students; these factors may limit generalizability to other populations. Future work should include external validation in more diverse cohorts and scales adapted for different cultures to establish broader applicability. Second, vocal and facial data were collected under standardized tasks; naturalistic speech and spontaneous expressions in daily life may provide additional diagnostic cues. Although incorporating ecological momentary assessment could enhance the protocol, such methods require stringent ethical and privacy safeguards. Third, although learning-curve analysis indicates that the current dataset is sufficient for stable XGBoost training, further optimization of multimodal fusion strategies and deep-learning architectures may benefit from larger, multi-center corpora[31,32].

This study bridged the gap between subjective and objective measures in detecting adolescent depression by introducing a multimodal protocol that combines facial expressions, vocal characteristics, and a self-report scale. The XGBoost-driven multimodal model demonstrated high discriminatory power, exceptional stability, and a balanced precision-recall profile, supporting its potential for real-world deployment. However, due to the regional sample, culturally specific scale, and task-based data collection, further validation in larger, more diverse, and ecologically valid settings remains necessary.

| 1. | GBD 2019 Mental Disorders Collaborators. Global, regional, and national burden of 12 mental disorders in 204 countries and territories, 1990-2019: a systematic analysis for the Global Burden of Disease Study 2019. Lancet Psychiatry. 2022;9:137-150. [RCA] [PubMed] [DOI] [Full Text] [Cited by in Crossref: 5012] [Cited by in RCA: 3880] [Article Influence: 970.0] [Reference Citation Analysis (1)] |

| 2. | McCarron RM, Shapiro B, Rawles J, Luo J. Depression. Ann Intern Med. 2021;174:ITC65-ITC80. [RCA] [PubMed] [DOI] [Full Text] [Cited by in Crossref: 429] [Cited by in RCA: 364] [Article Influence: 72.8] [Reference Citation Analysis (1)] |

| 3. | Shorey S, Ng ED, Wong CHJ. Global prevalence of depression and elevated depressive symptoms among adolescents: A systematic review and meta-analysis. Br J Clin Psychol. 2022;61:287-305. [RCA] [PubMed] [DOI] [Full Text] [Cited by in Crossref: 1170] [Cited by in RCA: 880] [Article Influence: 220.0] [Reference Citation Analysis (1)] |

| 4. | Tang X, Tang S, Ren Z, Wong DFK. Prevalence of depressive symptoms among adolescents in secondary school in mainland China: A systematic review and meta-analysis. J Affect Disord. 2019;245:498-507. [RCA] [PubMed] [DOI] [Full Text] [Cited by in Crossref: 264] [Cited by in RCA: 185] [Article Influence: 26.4] [Reference Citation Analysis (0)] |

| 5. | Leung DYP, Leung SF, Zhang XL, Ruan JY, Yeung WF, Mak YW. Factors associated with severe depressive symptoms among Chinese secondary school students in Hong Kong: a large cross-sectional survey. Front Public Health. 2023;11:1148528. [RCA] [PubMed] [DOI] [Full Text] [Full Text (PDF)] [Cited by in Crossref: 5] [Cited by in RCA: 3] [Article Influence: 1.0] [Reference Citation Analysis (0)] |

| 6. | Daly M. Prevalence of Depression Among Adolescents in the U.S. From 2009 to 2019: Analysis of Trends by Sex, Race/Ethnicity, and Income. J Adolesc Health. 2022;70:496-499. [RCA] [PubMed] [DOI] [Full Text] [Cited by in Crossref: 311] [Cited by in RCA: 240] [Article Influence: 60.0] [Reference Citation Analysis (1)] |

| 7. | Kalin NH. Anxiety, Depression, and Suicide in Youth. Am J Psychiatry. 2021;178:275-279. [RCA] [PubMed] [DOI] [Full Text] [Cited by in Crossref: 96] [Cited by in RCA: 67] [Article Influence: 13.4] [Reference Citation Analysis (0)] |

| 8. | Kim H. The Mediating Effect of Depression on the Relationship between Loneliness and Substance Use in Korean Adolescents. Behav Sci (Basel). 2024;14:241. [RCA] [PubMed] [DOI] [Full Text] [Full Text (PDF)] [Cited by in Crossref: 8] [Cited by in RCA: 5] [Article Influence: 2.5] [Reference Citation Analysis (0)] |

| 9. | Lu B, Lin L, Su X. Global burden of depression or depressive symptoms in children and adolescents: A systematic review and meta-analysis. J Affect Disord. 2024;354:553-562. [RCA] [PubMed] [DOI] [Full Text] [Cited by in Crossref: 175] [Cited by in RCA: 136] [Article Influence: 68.0] [Reference Citation Analysis (1)] |

| 10. | Adibi P, Kalani S, Zahabi SJ, Asadi H, Bakhtiar M, Heidarpour MR, Roohafza H, Shahoon H, Amouzadeh M. Emotion recognition support system: Where physicians and psychiatrists meet linguists and data engineers. World J Psychiatry. 2023;13:1-14. [PubMed] [DOI] [Full Text] |

| 11. | Robinson J, Khan N, Fusco L, Malpass A, Lewis G, Dowrick C. Why are there discrepancies between depressed patients’ Global Rating of Change and scores on the Patient Health Questionnaire depression module? A qualitative study of primary care in England. BMJ Open. 2017;7:e014519. [RCA] [PubMed] [DOI] [Full Text] [Full Text (PDF)] [Cited by in Crossref: 32] [Cited by in RCA: 26] [Article Influence: 2.9] [Reference Citation Analysis (0)] |

| 12. | Levis B, Sun Y, He C, Wu Y, Krishnan A, Bhandari PM, Neupane D, Imran M, Brehaut E, Negeri Z, Fischer FH, Benedetti A, Thombs BD; Depression Screening Data (DEPRESSD) PHQ Collaboration, Che L, Levis A, Riehm K, Saadat N, Azar M, Rice D, Boruff J, Kloda L, Cuijpers P, Gilbody S, Ioannidis J, McMillan D, Patten S, Shrier I, Ziegelstein R, Moore A, Akena D, Amtmann D, Arroll B, Ayalon L, Baradaran H, Beraldi A, Bernstein C, Bhana A, Bombardier C, Buji RI, Butterworth P, Carter G, Chagas M, Chan J, Chan LF, Chibanda D, Cholera R, Clover K, Conway A, Conwell Y, Daray F, de Man-van Ginkel J, Delgadillo J, Diez-Quevedo C, Fann J, Field S, Fisher J, Fung D, Garman E, Gelaye B, Gholizadeh L, Gibson L, Goodyear-Smith F, Green E, Greeno C, Hall B, Hampel P, Hantsoo L, Haroz E, Harter M, Hegerl U, Hides L, Hobfoll S, Honikman S, Hudson M, Hyphantis T, Inagaki M, Ismail K, Jeon HJ, Jetté N, Khamseh M, Kiely K, Kohler S, Kohrt B, Kwan Y, Lamers F, Asunción Lara M, Levin-Aspenson H, Lino V, Liu SI, Lotrakul M, Loureiro S, Löwe B, Luitel N, Lund C, Marrie RA, Marsh L, Marx B, McGuire A, Mohd Sidik S, Munhoz T, Muramatsu K, Nakku J, Navarrete L, Osório F, Patel V, Pence B, Persoons P, Petersen I, Picardi A, Pugh S, Quinn T, Rancans E, Rathod S, Reuter K, Roch S, Rooney A, Rowe H, Santos I, Schram M, Shaaban J, Shinn E, Sidebottom A, Simning A, Spangenberg L, Stafford L, Sung S, Suzuki K, Swartz R, Tan PLL, Taylor-Rowan M, Tran T, Turner A, van der Feltz-Cornelis C, van Heyningen T, van Weert H, Wagner L, Li Wang J, White J, Winkley K, Wynter K, Yamada M, Zhi Zeng Q, Zhang Y. Accuracy of the PHQ-2 Alone and in Combination With the PHQ-9 for Screening to Detect Major Depression: Systematic Review and Meta-analysis. JAMA. 2020;323:2290-2300. [RCA] [PubMed] [DOI] [Full Text] [Full Text (PDF)] [Cited by in Crossref: 491] [Cited by in RCA: 420] [Article Influence: 70.0] [Reference Citation Analysis (1)] |

| 13. | Li J, Peng J. End-to-End Multimodal Emotion Recognition Based on Facial Expressions and Remote Photoplethysmography Signals. IEEE J Biomed Health Inform. 2024;28:6054-6063. [RCA] [PubMed] [DOI] [Full Text] [Cited by in Crossref: 16] [Reference Citation Analysis (0)] |

| 14. | Wu M, Teng W, Fan C, Pei S, Li P, Pei G, Li T, Liang W, Lv Z. Multimodal Emotion Recognition Based on EEG and EOG Signals Evoked by the Video-Odor Stimuli. IEEE Trans Neural Syst Rehabil Eng. 2024;32:3496-3505. [RCA] [PubMed] [DOI] [Full Text] [Cited by in Crossref: 12] [Cited by in RCA: 2] [Article Influence: 1.0] [Reference Citation Analysis (0)] |

| 15. | Wang S, Qu JZ, Zhang Y, Zhang YD. Multimodal Emotion Recognition from EEG Signals and Facial Expressions. IEEE Access. 2023;11:33061-33068. [DOI] [Full Text] |

| 16. | ArulDass SD, Jayagopal P. Identifying Complex Emotions in Alexithymia Affected Adolescents Using Machine Learning Techniques. Diagnostics (Basel). 2022;12:3188. |

| 17. | Weavers B, Heron J, Thapar AK, Stephens A, Lennon J, Bevan Jones R, Eyre O, Anney RJ, Collishaw S, Thapar A, Rice F. The antecedents and outcomes of persistent and remitting adolescent depressive symptom trajectories: a longitudinal, population-based English study. Lancet Psychiatry. 2021;8:1053-1061. |

| 18. | Liang S, Huang Z, Wang Y, Wu Y, Chen Z, Zhang Y, Guo W, Zhao Z, Ford SD, Palaniyappan L, Li T. Using a longitudinal network structure to subgroup depressive symptoms among adolescents. BMC Psychol. 2024;12:46. |

| 19. | Fecke M, Fehr A, Schlütz D, Zillich AF. The Ethics of Gatekeeping: How Guarding Access Influences Digital Child and Youth Research. Media Commun. 2022;10:361-370. |

| 20. | Ren Z, Zhou G, Wang Q, Xiong W, Ma J, He M, Shen Y, Fan X, Guo X, Gong P, Liu M, Yang X, Liu H, Zhang X. Associations of family relationships and negative life events with depressive symptoms among Chinese adolescents: A cross-sectional study. PLoS One. 2019;14:e0219939. |

| 21. | Lemonious T, Codner M, Pluhar E. A review on the disparities in the identification and assessment of depression in Black adolescents and young adults. How can clinicians help to close the gap? Curr Opin Pediatr. 2022;34:313-319. |

| 22. | Menne F, Dörr F, Schräder J, Tröger J, Habel U, König A, Wagels L. The voice of depression: speech features as biomarkers for major depressive disorder. BMC Psychiatry. 2024;24:794. |

| 23. | Li X, Yi X, Ye J, Zheng Y, Wang Q. SFTNet: A microexpression-based method for depression detection. Comput Methods Programs Biomed. 2024;243:107923. |

| 24. | Krithika LB, Priya GGL. Graph based feature extraction and hybrid classification approach for facial expression recognition. J Ambient Intell Humaniz Comput. 2021;12:2131-2147. |

| 25. | Guarrasi V, Bertgren A, Näslund U, Wennberg P, Soda P, Grönlund C. Beyond unimodal analysis: Multimodal ensemble learning for enhanced assessment of atherosclerotic disease progression. Comput Med Imaging Graph. 2025;124:102617. |

| 26. | Smrke U, Mlakar I, Lin S, Musil B, Plohl N. Language, Speech, and Facial Expression Features for Artificial Intelligence-Based Detection of Cancer Survivors’ Depression: Scoping Meta-Review. JMIR Ment Health. 2021;8:e30439. |

| 27. | Suryaputri IY, Mubasyiroh R, Idaiani S, Indrawati L. Determinants of Depression in Indonesian Youth: Findings From a Community-based Survey. J Prev Med Public Health. 2022;55:88-97. |

| 28. | Mienye ID, Sun YX. A Survey of Ensemble Learning: Concepts, Algorithms, Applications, and Prospects. IEEE Access. 2022;10:99129-99149. |

| 29. | Cheng Y, Petrides KV, Li J. Estimating the Minimum Sample Size for Neural Network Model Fitting-A Monte Carlo Simulation Study. Behav Sci (Basel). 2025;15:211. |

| 30. | Dong Y, Wen H, Lu C, Li J, Zheng Q. Predicting depression risk with machine learning models: identifying familial, personal, and dietary determinants. BMC Psychiatry. 2025;25:883. |

| 31. | Joshi G, Tasgaonkar V, Deshpande A, Desai A, Shah B, Kushawaha A, Sukumar A, Kotecha K, Kunder S, Waykole Y, Maheshwari H, Das A, Gupta S, Subudhi A, Jain P, Jain NK, Walambe R, Kotecha K. Multimodal machine learning for deception detection using behavioral and physiological data. Sci Rep. 2025;15:8943. |

| 32. | Liu Y, Yu Y, Tao H, Ye Z, Wang S, Li H, Hu D, Zhou ZZ, Zeng LL. Cognitive Load Prediction From Multimodal Physiological Signals Using Multiview Learning. IEEE J Biomed Health Inform. 2025;29:3282-3292. |