Published online Mar 28, 2026. doi: 10.3748/wjg.v32.i12.115990

Revised: November 29, 2025

Accepted: January 22, 2026

Published online: March 28, 2026

Processing time: 139 Days and 22.4 Hours

Gastrointestinal (GI) cancers are a leading cause of cancer-related death, and early diagnosis is crucial for improving patient outcomes. Traditional endoscopy, while essential, depends on the skill of endoscopists and is prone to errors. Recent ad

Core Tip: Artificial intelligence (AI)-assisted endoscopy technologies have significantly advanced early detection and diagnosis of gastrointestinal cancers, enhancing adenoma detection rates and improving clinical outcomes. Recent studies demonstrate that AI, through deep learning models, can effectively identify small lesions, reduce missed diagnoses, and assist in clinical decision-making across various gastrointestinal regions, including the esophagus, stomach, and colon. However, challenges such as data quality, model generalization, and physician-AI collaboration remain. Overcoming these issues will ensure AI’s broader clinical integration, making it a vital tool in precision medicine and early cancer screening.

- Citation: Ning ZX, Xiao JJ, Zhou ZX. Artificial intelligence-assisted endoscopy in the detection of early gastrointestinal cancer: Progress, challenges, and future directions. World J Gastroenterol 2026; 32(12): 115990

- URL: https://www.wjgnet.com/1007-9327/full/v32/i12/115990.htm

- DOI: https://dx.doi.org/10.3748/wjg.v32.i12.115990

Gastrointestinal (GI) cancers remain a major cause of cancer-related mortality worldwide, and early diagnosis is critical for effective treatment[1]. GI malignancies, including those of the stomach, small intestine, esophagus, rectum, and colon, pose a serious threat to global health. According to the World Health Organization, oral cancer alone caused approxi

Endoscopy, as a direct visualization method for the GI tract, plays an indispensable role in early cancer detection. For instance, colonoscopy has demonstrated high sensitivity in colorectal cancer screening. Conventional white-light en

In recent years, artificial intelligence (AI) has achieved major breakthroughs in medical imaging, particularly in image processing and pattern recognition, providing new opportunities to enhance diagnostic accuracy, speed, and automation. Deep learning, especially convolutional neural networks (CNNs), has been widely applied to medical image analysis[5]. CNNs can automatically extract hierarchical visual features from images, significantly improving computer-aided dia

A comprehensive literature search was conducted to identify studies related to the application of AI-assisted endoscopy in the early detection of GI cancers. The search was performed in databases such as PubMed, IEEE Xplore, and Google Scholar, using keywords like “AI-assisted endoscopy”, “early gastrointestinal cancer detection”, “deep learning in endoscopy”, and “gastrointestinal imaging”, combined with appropriate Boolean operators. The search was limited to articles published between 2010 and 2025, and only studies published in English were included.

The inclusion criteria were as follows: First, original research was prioritized, focusing on AI applications in GI endoscopy, particularly studies involving the early detection of cancers such as gastric cancer, colorectal cancer, eso

Exclusion criteria included studies that did not focus on AI applications in endoscopy and those lacking clinical validation or performance evaluation. Studies that did not provide sufficient clinical data or experimental evidence to support the practical application of AI algorithms were excluded. Animal studies, in vitro studies, and papers that primarily involved theoretical analysis were also excluded.

The process of literature identification and selection followed a systematic approach. After initial retrieval, studies were screened for relevance based on their subject matter, and full-text reviews were conducted to confirm compliance with the inclusion criteria. Ultimately, studies that contributed significantly to the understanding of AI’s role in early GI cancer detection were selected, so that the review is academically rigorous and its discussion is well supported.

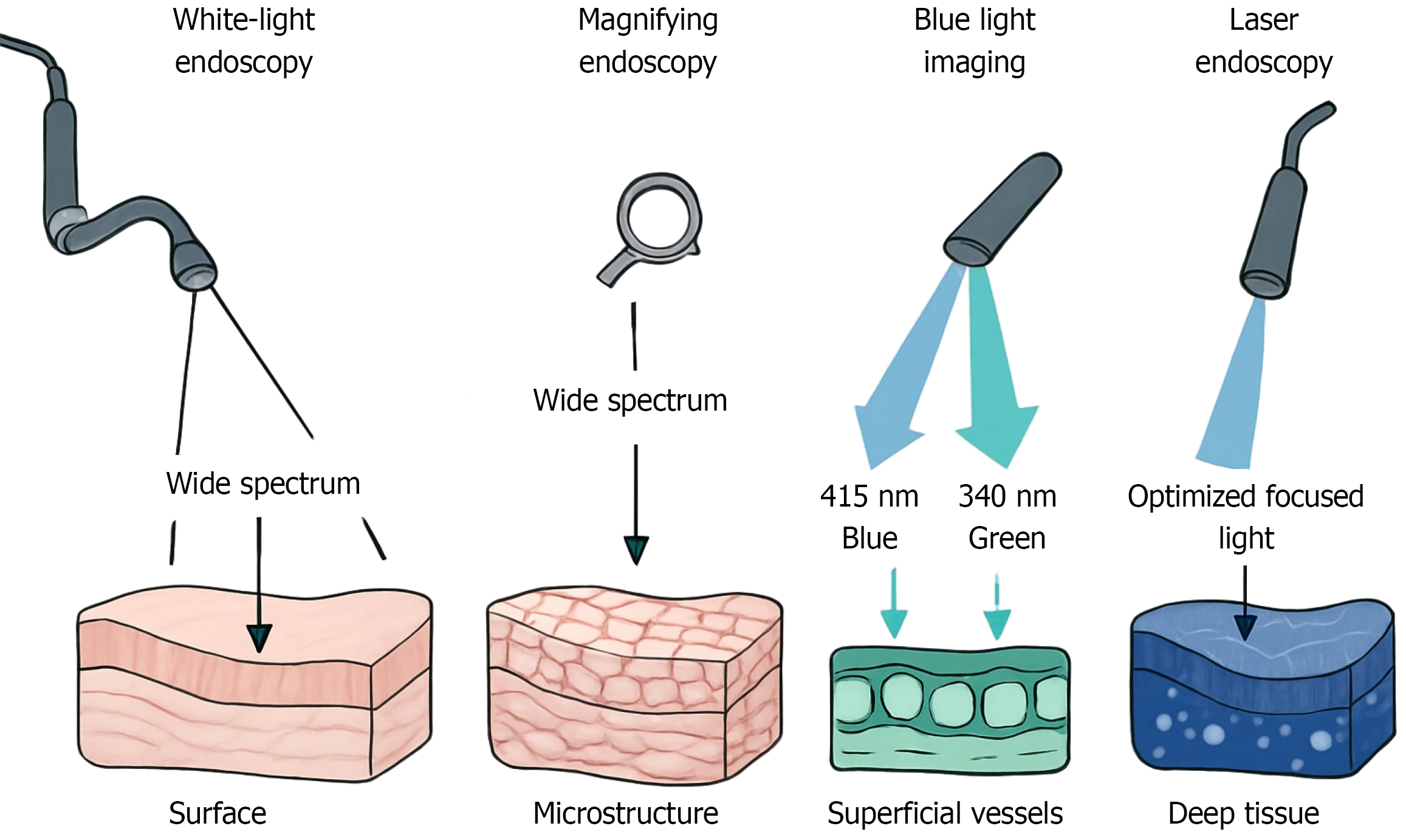

WLE remains the most commonly used method in GI examination. It operates by illuminating mucosal tissue with visible light and capturing reflected signals to produce detailed surface images. While WLE enables direct visualization of mucosal structure and color changes, its sensitivity for early lesions is limited due to minimal contrast between abnormal and surrounding normal tissues. To overcome these shortcomings, magnifying endoscopy (ME) enhances visualization by providing optical magnification of up to several dozen times. When combined with chromoendoscopy techniques using dyes such as indigo carmine or methylene blue, ME allows detailed observation of mucosal microstructures and vascular patterns, thereby improving the detection of minute lesions[6]. The principle of chromoendoscopy lies in the selective absorption of dyes by different tissue components, which enhances contrast and facilitates distinction between neoplastic and non-neoplastic mucosa. In comparison, narrow-band imaging (NBI) utilizes specific narrow-band wavelengths (415 nm and 540 nm) to enhance contrast in superficial vascular networks and mucosal surface patterns. NBI has proven particularly effective in the detection of early neoplastic changes[7], and multiple clinical studies have de

Computer vision technologies form the foundation of AI-assisted endoscopy. The core function of these systems is to simulate human visual perception, extracting diagnostic features from two-dimensional endoscopic images and classifying lesions.

Early CADe systems were limited by manually engineered features such as color histograms and texture descriptors, resulting in poor generalization across different clinical settings. The emergence of deep learning, and particularly CNNs, has overcome these limitations. CNNs can automatically learn hierarchical features from raw image data, eliminating the need for manual feature design and dramatically improving detection performance. Networks such as the visual geometry group network (VGGNet), residual network (ResNet), and dense convolutional network (DenseNet) have shown excellent results in early detection of gastric and colorectal cancers, achieving diagnostic accuracy comparable to expert endoscopists (Table 1). From a pra

| Architecture name | Core innovation/structural features | Relevance in medical field |
| --- | --- | --- |
| VGGNet | Adopts a concise structure of “stacked small convolutional kernels (3 × 3) + pooling layers”, enhancing feature extraction capability by increasing network depth | A classic model for basic feature extraction in medical images, suitable for preliminary lesion detection and medical image classification (e.g., X-ray disease screening), laying the foundation for subsequent architectures in medical AI |
| ResNet | Introduces “residual connections” (cross-layer feature transmission) to solve the gradient vanishing problem in deep network training, enabling the construction of ultra-deep networks | Significantly improves feature extraction accuracy for complex medical images, applicable to pathological section analysis and 3D medical image segmentation (e.g., tumor boundary extraction), serving as a core architecture for disease diagnosis models |
| DenseNet | Employs “dense connections” (direct feature sharing across all layers) to enhance feature propagation efficiency and reduce parameter redundancy | Excels in fine-grained analysis of medical images, such as micro-lesion recognition and multi-modal medical image fusion (e.g., combining CT and MRI images), demonstrating distinct advantages in precision medical diagnosis |
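The two connectivity patterns summarized in Table 1 can be illustrated with a minimal sketch. It uses plain Python lists instead of image tensors, and the toy layers `halve` and `mean_feature` are hypothetical stand-ins for convolutional layers; it is meant only to show how features flow through a residual connection versus dense connections, not how any published endoscopy model is implemented.

```python
from typing import Callable, List

Vector = List[float]
Layer = Callable[[Vector], Vector]


def relu(x: Vector) -> Vector:
    """Element-wise rectified linear unit."""
    return [max(0.0, v) for v in x]


def residual_block(x: Vector, f: Layer) -> Vector:
    """ResNet-style skip connection: output = f(x) + x."""
    fx = f(x)
    if len(fx) != len(x):
        raise ValueError("residual branch must preserve the feature size")
    return [a + b for a, b in zip(fx, x)]


def dense_block(x: Vector, layers: List[Layer]) -> Vector:
    """DenseNet-style connectivity: every layer receives the concatenation
    of the block input and all earlier layer outputs."""
    features = list(x)
    for layer in layers:
        new_features = layer(features)
        features = features + new_features  # concatenate, do not overwrite
    return features


# Hypothetical toy layers (stand-ins for learned convolutions).
def halve(x: Vector) -> Vector:
    return relu([0.5 * v for v in x])


def mean_feature(x: Vector) -> Vector:
    return [sum(x) / len(x)]
```

In the residual block the input is added back to the branch output, so the identity path survives even when the branch contributes nothing. In the dense block the feature vector grows with each layer, which is the "direct feature sharing" described in the table.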

The technological advancements in AI-assisted endoscopic diagnosis are mainly reflected across several dimensions, including image preprocessing and enhancement, CADe systems, and CADx systems. Through deep learning models, these technologies have significantly improved the efficiency and accuracy of endoscopic diagnosis. In terms of image preprocessing and enhancement, deep learning models effectively address the limitations of traditional endoscopic image quality. For instance, image denoising and dehazing models can remove artifacts caused by uneven illumination or mu

Early detection of oral lesions: Oral cancer, particularly oral squamous cell carcinoma (OSCC), is a major component of GI tumors, with great clinical significance for early screening and prevention. OSCC is the most common subtype of oral cancer, and its global incidence continues to rise[6]. Due to the anatomical complexity of the oral cavity, lesions often remain hidden in regions such as the base of the tongue, buccal mucosa, and palate. Conventional visual examination has limited sensitivity for early detection, leading to a considerable proportion of patients being diagnosed at middle or ad

Recent advancements in AI technologies have opened new possibilities for the early diagnosis and screening of oral cancer[7,13]. Deep learning-based oral endoscopic image recognition systems have matured substantially in recent years. These systems can automatically identify suspicious lesion areas under both WLE and NBI modes, significantly im

A recent systematic review and meta-analysis indicated that NBI has high diagnostic accuracy in evaluating the malignant transformation of oral potentially malignant disorders, particularly when applying the intrapapillary capillary loop classification system[19]. NBI-guided surgical resection of oral cancer has also been reported to reduce local re

Most studies in this section are retrospective and observational, with relatively small sample sizes. The use of different AI algorithms and imaging devices across studies introduces some variability in results. While the majority of studies report positive findings, the heterogeneity in datasets and equipment limits the generalizability of the conclusions. Fur

Early detection of pharyngeal lesions: The pharynx, as the intersection of the digestive and respiratory tracts, holds significant clinical importance in the early screening of GI tumors[25]. Pharyngeal cancers, particularly laryngeal and hypopharyngeal carcinomas, often present with nonspecific early symptoms such as mild sore throat, foreign-body sensation, or hoarseness that can easily be overlooked by both patients and clinicians. Consequently, most cases are diagnosed at advanced stages, severely impacting prognosis and survival outcomes[26].

In recent years, AI has gained growing attention in the early diagnosis of pharyngeal cancers. Researchers have integrated acoustic signals and endoscopic image data to develop multimodal diagnostic models capable of capturing correlations between vocal changes and mucosal abnormalities[27]. Additionally, deep learning-based video stream analysis has introduced new approaches for real-time lesion recognition, enabling automatic segmentation and loca

AI-assisted diagnostic systems that integrate multimodal data, including laryngoscopic images, NBI, white-light images, endoscopic biopsy images, and patient voice characteristics, have demonstrated high diagnostic performance for detecting laryngopharyngeal cancers. One such system achieved 90.0% sensitivity, 92.3% specificity, and 91.7% overall accuracy[25]. AI models have shown remarkable results, including achieving 93.3% sensitivity for detecting hypo

The research on AI in pharyngeal cancer detection is still in its early stages, with many studies being pilot projects. These studies tend to have small sample sizes and limited follow-up. While AI models show high diagnostic perfor

Early detection of esophageal cancer: Esophageal cancer, including both esophageal squamous cell carcinoma and adenocarcinoma, remains a major global health burden with high incidence and mortality rates[32]. Early diagnosis is crucial to improving patient prognosis, but early-stage esophageal lesions often present with subtle and nonspecific endoscopic findings, such as mild mucosal irregularities, faint erythema, or slight discoloration, that are easily over

AI-based recognition technologies utilizing NBI and ME have advanced rapidly in recent years. AI systems can automatically analyze microvascular morphology and mucosal surface patterns in endoscopic images, assisting clinicians in accurately identifying early neoplastic lesions[33,34]. Deep learning models like YOLOv5 and RetinaNet have been employed to develop algorithms that combine white-light and NBI data, achieving accurate early diagnosis of esophageal cancer[35].

AI systems have significantly improved diagnostic accuracy for early esophageal cancer and precancerous lesions, especially in Barrett’s esophagus and esophageal adenocarcinoma[36]. Integration of AI with endoscopic ultrasonography has also enhanced the accuracy of predicting tumor invasion depth, which aids in clinical staging and treatment decisions[37]. AI-assisted multi-omics analysis, encompassing genomics, proteomics, and radiomics, helps in personalized treatment planning[30,31]. Countries such as Japan and Germany have already developed and clinically validated AI-assisted systems for early esophageal cancer detection[33].

The studies on AI in esophageal cancer detection are mostly of medium quality, with several studies being observational and lacking randomization. Sample sizes are generally moderate, and there is some heterogeneity in the AI models used. Although AI has shown strong potential in detecting early lesions, further validation with larger and more diverse datasets is needed, particularly for the integration of multi-omics data.

Early detection of gastric cancer: Gastric cancer remains a significant global health challenge, particularly in East Asia, due to its high incidence and mortality rates[38]. Early detection and timely treatment are critical for improving patient outcomes. However, traditional gastroscopy faces significant challenges due to the stomach’s complex anatomy, wide examination field, and variations in operator experience[39].

AI, particularly deep learning algorithms like CNNs, has shown exceptional performance in real-time analysis of gastroscopic videos. AI can automate feature extraction and lesion identification, assisting physicians in detecting suspi

AI-assisted gastroscopy has been shown to achieve significantly higher sensitivity for early gastric cancer detection compared to general endoscopists[43]. In large-scale clinical trials, AI systems not only improved diagnostic accuracy but also reduced lesion recognition time, highlighting their potential in clinical practice[44]. AI applications also aid in opti

AI-assisted gastroscopy studies mostly rely on retrospective analyses and single-center data. The sample sizes are often small, which may limit the robustness of the findings. There is also variability in the AI models used across studies. While the studies generally show improvements in diagnostic accuracy, the lack of standardized protocols and equipment differences across centers requires more rigorous testing in multi-center trials to confirm these results.

Early detection of small intestinal lesions: Small bowel malignancies, although relatively rare, hold significant clinical importance, especially for evaluating small intestinal polyps, vascular malformations, Crohn’s disease, and small bowel bleeding. Traditional imaging methods have limitations due to the length and anatomical location of the small intestine[46,47].

AI has demonstrated substantial potential in analyzing capsule endoscopy (CE) images. Deep learning algorithms rapidly process large CE datasets to automatically detect and localize lesions, such as bleeding sites, ulcers, polyps, and tumors[48]. This automation reduces physician workload and enhances detection accuracy[49]. Additionally, AI models are increasingly being applied to assess small intestinal motility and evaluate conditions like Crohn’s disease[49,50].

AI-assisted capsule endoscopy systems have been proven to perform at levels comparable to, or even surpassing, experienced clinicians in detecting small bowel lesions[51]. AI tools for inflammatory bowel disease have already imp

The majority of studies on AI in small bowel cancer detection use CE data, with moderate sample sizes. The effectiveness of AI varies depending on the algorithms and imaging technologies used. While AI has demonstrated high accuracy in detecting lesions, the studies often rely on retrospective data, and more prospective studies with larger sample sizes are needed to validate the performance of AI-assisted CE systems in real-world settings.

Early detection of colorectal lesions: Colorectal cancer is the second leading cause of cancer-related deaths worldwide, and early identification of precancerous lesions is crucial to reducing colorectal cancer incidence and mortality. However, conventional colonoscopy faces challenges in detecting flat adenomas, which are often overlooked by endoscopists[54,55].

AI-driven CADe systems have been developed to analyze real-time colonoscopy video feeds, automatically identifying suspicious lesions and providing visual cues to endoscopists. These systems significantly improve ADR, particularly for small (< 5 mm) adenomas and serrated lesions[10,56].

AI-assisted colonoscopy has been shown to increase ADR from approximately 35% to 43%, with multiple studies confirming its effectiveness in enhancing adenoma detection[10]. Additionally, AI models help with lesion characteri

AI-based CADe systems for colorectal cancer are well-supported by clinical studies, although many studies still rely on retrospective data. The studies show a significant improvement in ADR, but sample sizes are often small, and there is variability in the quality of colonoscopy equipment used. The evidence is promising, but further large-scale, multi-center studies are necessary to fully assess the potential of AI in routine clinical practice.

Early detection of anal canal lesions: Diseases of the anal canal, particularly anal squamous cell carcinoma, are often misdiagnosed as hemorrhoids, leading to delayed treatment and poorer prognoses[57]. The diagnostic accuracy of tra

AI applications in high-resolution anoscopy (HRA) have demonstrated potential for improving the accuracy of early detection of anal canal lesions. Deep learning models, such as CNNs, are particularly effective for lesion detection and classification[58].

AI-based predictive models have integrated imaging features with human papillomavirus infection status to enhance the accuracy of anal canal cancer risk prediction[59]. AI models have shown promising results in differentiating between high-grade squamous intraepithelial lesions and other conditions, significantly improving early detection and reducing misdiagnosis[59].

The research on AI in anal canal cancer detection is limited, with most studies focusing on HRA data. Sample sizes are often small, and there is considerable variability in imaging techniques and AI algorithms used. Despite positive results, the evidence is still preliminary, and more extensive clinical trials are needed to confirm the diagnostic accuracy of AI models in detecting anal canal lesions, particularly in high-risk populations.

With the rapid advancement of AI-assisted endoscopic technologies, an increasing number of studies are promoting the transition of AI systems from laboratory algorithm validation to real-world clinical practice. Accordingly, clinical research and validation efforts now focus on evaluating the diagnostic efficacy, clinical feasibility, operational convenience, and impact on patient outcomes of AI systems during actual endoscopic examinations.

In terms of clinical trial design and methodology, existing studies can generally be categorized into two types. The first type is retrospective studies, which utilize previously collected endoscopic images or video data to validate AI algo

The second type is prospective randomized controlled trials (RCTs), in which patients are randomly assigned to receive either AI-assisted endoscopy or conventional endoscopic examination. The diagnostic efficacy of AI is then verified by comparing parameters such as ADR, early cancer detection rate, and procedure time between the two groups. For instance, numerous multicenter RCTs have confirmed that AI can significantly improve ADR in colorectal screening.
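As a rough illustration of how ADR can be compared between two trial arms, the sketch below computes ADR per arm and a pooled two-proportion z-test. The choice of test is an assumption made for illustration (individual trials may use other analyses), and the counts in the usage note are hypothetical, not data from any cited study.

```python
import math
from typing import Tuple


def adenoma_detection_rate(patients_with_adenoma: int, total_patients: int) -> float:
    """ADR = patients with at least one adenoma detected / patients examined."""
    if total_patients <= 0:
        raise ValueError("total_patients must be positive")
    return patients_with_adenoma / total_patients


def two_proportion_z_test(x1: int, n1: int, x2: int, n2: int) -> Tuple[float, float]:
    """Pooled two-proportion z-test; returns (z, two-sided p-value)."""
    p1 = x1 / n1
    p2 = x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    variance = pooled * (1.0 - pooled) * (1.0 / n1 + 1.0 / n2)
    if variance == 0.0:
        raise ValueError("test undefined when pooled proportion is 0 or 1")
    z = (p1 - p2) / math.sqrt(variance)
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2.0))
    return z, p_value
```

For example, with hypothetical arms of 1000 patients each, `two_proportion_z_test(430, 1000, 350, 1000)` compares an ADR of 43% in the AI-assisted arm against 35% in the conventional arm.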

Across different GI regions, AI has demonstrated strong clinical performance. In the esophagus, AI systems have shown outstanding results in detecting Barrett’s esophagus and early esophageal squamous cell carcinoma, with dia

In real-world clinical workflows, the value of AI extends beyond merely improving lesion detection rates; it also positively influences operator behavior and decision-making. First, AI can highlight suspicious regions in real time within endoscopic video streams, prompting operators to slow down and inspect more carefully. Second, AI alert systems can effectively reduce missed lesions when endoscopists experience fatigue or lapses in attention. Fur

From the perspective of clinical validation outcomes and ongoing challenges, the results are highly encouraging. Mul

| System name | Target site and function | Key performance metrics | Validation status and characteristics |
| --- | --- | --- | --- |
| Deep learning-based endoscopy systems | Esophagus, stomach: Early cancer detection and diagnosis | Sensitivity for early gastric cancer > 90%, specificity > 80%; detection accuracy for early esophageal cancer comparable to expert endoscopists | Mostly in clinical research phase: Validation often involves single-center or retrospective studies; demonstrates potential to match or surpass human experts in specific tasks |
| AI-assisted capsule endoscopy systems | Small bowel: Automatic detection of ulcers, bleeding, polyps, etc. | Sensitivity for small bowel lesions > 95%, specificity > 90%; significantly increases reading speed, reducing physician workload by > 70% | Validated by multicenter prospective studies: Some systems have received regulatory approval and are in clinical use; aims to address the inefficiency of analyzing large CE image volumes |
| CADe colonoscopy systems | Colorectum: Real-time polyp detection (CADe) | Increases adenoma detection rate by an absolute 5%-10%; particularly effective for detecting small polyps (< 5 mm) and flat adenomas | Some systems approved by FDA, CE, NMPA: Supported by the highest level of evidence (multicenter RCTs); integrated into commercial endoscopy platforms; value is pronounced in community practice settings |
| CADx colonoscopy systems | Colorectum: Real-time polyp characterization (CADx) | Accuracy for optical diagnosis of adenomatous polyps > 90%; enables reliable “diagnose-and-leave” or “resect-and-discard” strategies with > 90% confidence | Some features approved and commercialized: Integrated with CADe systems; aims to provide “see-and-diagnose” capability, reducing unnecessary polypectomies and screening costs |
| AI-assisted laryngoscopy/pharyngeal diagnosis systems | Pharynx, larynx: Early cancer detection | Sensitivity for laryngopharyngeal cancer 90%-93%, specificity > 92%; capable of multimodal analysis integrating voice signals and images | Primarily in prospective research or pilot project phase: Sample sizes are relatively small, but shows great promise for multimodal AI in complex anatomical sites |
| AI-assisted high-resolution anoscopy | Anal canal: Detection of HSIL | Shows high accuracy in differentiating HSIL from other conditions; can integrate HPV status for risk prediction | Research is very preliminary and exploratory: Limited sample sizes; a promising tool for screening specific high-risk populations but requires further validation |
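The sensitivity, specificity, and accuracy figures reported in the table are all derived from the same four confusion-matrix counts. A minimal sketch of those definitions follows; the class name `ConfusionCounts` and the example counts in the usage note are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Per-lesion (or per-patient) counts against the reference standard."""
    tp: int  # lesions present and flagged by the system
    fp: int  # no lesion, but flagged
    tn: int  # no lesion and not flagged
    fn: int  # lesion present but missed


def sensitivity(c: ConfusionCounts) -> float:
    """Fraction of true lesions that were detected."""
    return c.tp / (c.tp + c.fn)


def specificity(c: ConfusionCounts) -> float:
    """Fraction of lesion-free cases correctly left unflagged."""
    return c.tn / (c.tn + c.fp)


def accuracy(c: ConfusionCounts) -> float:
    """Fraction of all cases classified correctly."""
    return (c.tp + c.tn) / (c.tp + c.fp + c.tn + c.fn)
```

With hypothetical counts `ConfusionCounts(tp=90, fp=20, tn=80, fn=10)`, sensitivity is 0.90, specificity is 0.80, and accuracy is 0.85. Because accuracy depends on the ratio of diseased to healthy cases, it is not directly comparable across studies with different case mixes.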

AI-assisted endoscopic technologies have achieved remarkable progress in the screening of early GI cancers, yet several significant challenges remain. First, there are considerable performance variations among different AI systems, which hinder their global standardization and generalizability.

Data quality and annotation represent another major challenge. AI models rely heavily on high-quality annotated datasets; however, the complexity of endoscopic imagery often leads to inconsistent or inaccurate labeling, directly affecting model training performance. The subjectivity of annotation further exacerbates fluctuations in data quality, reducing reproducibility and reliability across studies.
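One common way to quantify annotation subjectivity is inter-annotator agreement. The sketch below computes Cohen's kappa for two annotators labeling the same frames. The choice of kappa is an assumption made for illustration and is not a method described in the studies reviewed here; the labels in the usage note are hypothetical.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohen_kappa(labels_a: Sequence[Hashable], labels_b: Sequence[Hashable]) -> float:
    """Cohen's kappa: agreement between two annotators, corrected for chance."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty set")
    n = len(labels_a)
    # Observed agreement: fraction of items given the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Expected agreement if each annotator labeled at random with
    # their own marginal label frequencies.
    count_a = Counter(labels_a)
    count_b = Counter(labels_b)
    expected = sum(count_a[k] * count_b[k] for k in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label
    return (observed - expected) / (1.0 - expected)
```

For two hypothetical annotators who agree on 6 of 8 frames, with each calling 3 frames "lesion" and 5 "normal", kappa is about 0.47 even though raw agreement is 75%, which shows how much apparent agreement chance alone can explain.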

Additionally, the generalization capability of AI models remains insufficient. Models trained on data from a specific hospital, device, or population often perform inconsistently in different clinical settings, particularly in resource-limited regions. This highlights the urgent need for cross-regional, multicenter datasets to improve model robustness and stability under diverse conditions.

The collaborative integration of AI and physicians also poses an ongoing challenge. Although AI can assist diagnosis, enhancing both efficiency and accuracy, achieving an optimal balance between AI’s automated analysis and clinicians’ experiential judgment remains unresolved. Overreliance on AI may risk diminishing physician autonomy and critical thinking.

Regulatory approval pathways are another critical hurdle for AI-assisted endoscopy. In many regions, AI technologies must undergo rigorous validation and approval processes to meet regulatory standards, which can be time-consuming and costly. Moreover, differences in regulatory frameworks across countries can delay the global adoption of AI systems. The regulatory landscape must evolve to create clear, standardized pathways for AI in medical devices to facilitate their faster and more widespread implementation.

Medicolegal considerations also pose challenges for AI integration in clinical practice. The use of AI for diagnostic support raises important legal questions regarding liability and accountability. In the event of a misdiagnosis or treatment error, it is unclear whether the physician, the AI system developer, or the healthcare institution is responsible. Clear guidelines and regulations are needed to address these concerns and ensure that AI applications are legally and ethically sound in clinical environments.

Explainability and interpretability of AI models remain an ongoing issue. Deep learning models, while powerful, are often criticized for their “black-box” nature, meaning their decision-making processes are not transparent or easily understood by clinicians. This lack of explainability can reduce physician trust in AI systems, particularly when critical decisions are involved. There is an urgent need for AI systems that not only perform well but also provide clinicians with clear, interpretable explanations of how conclusions were reached, ensuring that physicians can confidently incorporate AI into their decision-making process.
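One simple, model-agnostic way to probe a black-box classifier is occlusion sensitivity: mask one image patch at a time and record how much the model's score drops. The sketch below is an illustrative assumption rather than a technique attributed to any system discussed here. `toy_score` is a hypothetical stand-in for a trained model, and the image is a small grid of floats.

```python
from typing import Callable, List

Image = List[List[float]]


def occlusion_map(
    image: Image,
    score_fn: Callable[[Image], float],
    patch: int = 2,
    baseline: float = 0.0,
) -> List[List[float]]:
    """Return a heat map where each pixel holds the score drop observed
    when the patch containing that pixel is replaced by `baseline`."""
    height, width = len(image), len(image[0])
    base_score = score_fn(image)
    heat = [[0.0] * width for _ in range(height)]
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            rows = range(top, min(top + patch, height))
            cols = range(left, min(left + patch, width))
            occluded = [row[:] for row in image]  # copy, keep input intact
            for r in rows:
                for c in cols:
                    occluded[r][c] = baseline
            drop = base_score - score_fn(occluded)
            for r in rows:
                for c in cols:
                    heat[r][c] = drop
    return heat


def toy_score(image: Image) -> float:
    """Hypothetical 'model' that only looks at the top-left 2 x 2 region."""
    return sum(image[r][c] for r in range(2) for c in range(2))
```

Applied to a uniform 4 × 4 image, the map is nonzero only over the top-left patch, the one region the toy model depends on. On a real classifier the same procedure gives a coarse picture of which mucosal areas drive a prediction, at the cost of one forward pass per patch.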

Clinical workflow integration and implementation barriers also pose significant challenges. AI-assisted endoscopy must seamlessly integrate into existing clinical workflows, ensuring that the technology enhances efficiency without dis

In summary, AI-assisted endoscopy for early GI cancer screening faces multiple challenges, including data quality, model generalization, device compatibility, regulatory approval, legal and ethical considerations, explainability, clinical workflow integration, and human-AI collaboration frameworks. Addressing these issues will be essential for enabling AI to play a more integral role in clinical endoscopy, ultimately providing more precise and reliable support for the early detection and diagnosis of GI cancers.

Enhancing data quality and annotation consistency: To overcome the data quality and annotation challenges in AI-assisted endoscopy, a multi-faceted approach is needed. First, the establishment of standardized annotation guidelines is essential to reduce subjectivity and ensure consistent data labeling across institutions. This could involve collaboration between international expert panels to define common protocols for annotating endoscopic images. In addition, leveraging advanced AI-based pre-annotation tools, followed by expert validation, can significantly streamline the process while minimizing human errors. Moreover, creating large, diverse annotated datasets through multi-center collaborations will help improve the robustness of AI models, ensuring their ability to generalize across various clinical settings and populations. Examples such as the publicly available GI-pathology dataset and other large-scale collaborations can serve as models for future endeavors.

Fostering multi-center collaborations for model generalization: To address the issue of model generalization, particularly in resource-limited settings, it is crucial to promote large-scale multi-center data sharing and collaboration. This would enable AI models to be trained on more diverse datasets, improving their accuracy and applicability across different geographic regions and healthcare systems. The establishment of international research consortia dedicated to AI in medical imaging, similar to efforts like the Radiological Society of North America’s AI initiatives, would accelerate the creation of robust datasets that can better handle variations in patient populations, imaging devices, and clinical workflows. By incorporating data from a wide range of hospitals, regions, and demographics, these models can be more readily adapted to local conditions, ensuring that AI tools can provide equitable healthcare solutions across the globe.

Advancing explainability and human-AI collaboration: To improve the trust and adoption of AI systems in clinical practice, efforts should be directed at enhancing model explainability and fostering better collaboration between AI systems and clinicians. AI models should not only provide accurate diagnostic support but also offer transparent reasoning for their predictions, thereby enabling clinicians to understand and interpret AI-generated recommendations. Developing “explainable AI” frameworks that clarify how the AI arrives at specific conclusions would help bridge the gap between machine output and human decision-making. Furthermore, the integration of AI should not be viewed as a replacement for clinicians, but as a tool to augment their expertise. Efforts should focus on creating workflows that enable AI to assist without undermining physician autonomy. This could involve designing user-friendly interfaces.

Multimodal data fusion is a key direction for the future development of AI-assisted endoscopy technologies. The goal is to integrate endoscopic images, pathological images, genomic data, and clinical information to enable precise screening of early GI cancers. AI-driven liquid biopsy techniques, utilizing biomarkers such as circulating tumor DNA, circulating tumor cells, and exosomal RNA, offer non-invasive diagnostic options while emphasizing AI’s role in processing complex data and enhancing detection sensitivity. This cross-modal data integration significantly improves the accuracy and sensitivity of AI in GI endoscopic screening and supports the development of personalized treatment plans.

Real-time intelligent assistance is another important focus for the future of AI-assisted endoscopy. AI models have already been deployed for real-time processing on edge computing devices, enabling the instant detection of gastric neoplasms during endoscopic procedures with high classification accuracy and low inference latency. This real-time feedback system reduces dependency on cloud servers, ensuring data privacy and security, and improving diagnostic efficiency[60]. Future development will focus on algorithm optimization to enhance recognition speed and accuracy, as well as expanding real-time diagnostic capabilities to more GI diseases.

The integration of AI with augmented reality (AR) technology could substantially change endoscopic procedures. AR navigation systems have been explored in GI endoscopy and laparoscopy, where AR elements are overlaid on real-time endoscopic images to provide structural localization and lesion guidance, enhancing the intuitiveness and safety of the procedure[61]. Although the use of AR in GI endoscopy is still in its early stages, its combination with AI has the potential to become the core of next-generation intelligent endoscopic navigation systems. Future research will focus on developing more precise AR navigation algorithms and on using AI for real-time image analysis to offer more comprehensive procedural guidance to physicians.

In the area of personalized and precision medicine, AI-assisted endoscopy technologies are expected to integrate patient genomic data, family history, and other clinical indicators to create customized screening and treatment plans for each patient, thereby improving diagnostic accuracy and reducing unnecessary tests and interventions. In pathological diagnostics, multimodal visual-language AI-assisted systems, combining pathological images with natural language interaction, have been developed to enhance diagnostic efficiency and educational outcomes. Future directions will involve developing more sophisticated AI models that analyze multiple data types to provide personalized treatment recommendations for clinicians.

In broader medical fields, multimodal AI has become a growing trend. A significant body of research has explored the use of deep learning to integrate data from multiple medical modalities, showing that multi-source data integration can substantially improve overall model performance. These studies provide important insights for the development of future AI-assisted endoscopy systems.

This review has summarized the latest advances of AI in early GI cancer detection, spanning the oral cavity, pharynx, esophagus, stomach, small intestine, and colon. These advances have contributed to progress in early screening, diagnosis, and precision treatment of GI cancers. The focus has been on the potential of AI technologies to enhance the accuracy of early cancer screening, reduce missed diagnoses, and optimize clinical decision-making. In recent years, several prospective, multicenter clinical studies have demonstrated that AI-assisted endoscopic systems based on deep learning can significantly improve the adenoma detection rate (ADR) and the ability to identify small lesions. The introduction of AI not only enhances screening efficiency but also reduces the risk of missed or incorrect diagnoses in real-world clinical settings. However, AI-assisted endoscopy still faces several constraints on widespread clinical application. First, data quality and annotation consistency remain prominent issues. Most current AI models are trained on images collected at a single center with homogeneous devices, and their generalization ability may decrease significantly when they are applied across different regions and devices. Second, the variability of AI system performance across populations and disease spectra requires further evidence, which will have a key impact on technical standardization and regulatory approval. Third, the collaborative model between physicians and AI is not yet fully mature. While AI can provide lesion annotations and preliminary diagnostic suggestions during real-time endoscopy, physician experience and comprehensive judgment remain irreplaceable in interpreting complex lesions and formulating treatment strategies. Balancing efficiency gains against the safety and accuracy of clinical decisions will be a crucial topic for future development.

Looking ahead, the development of AI in early GI cancer screening will focus on three key directions. First, model generalization should be further enhanced by introducing large-scale datasets from multiple centers, devices, and demographic groups to reduce performance variability. Second, multimodal information, including endoscopic images, histopathological data, and genomic and microbiome data, should be integrated to achieve more precise personalized risk assessments and screening strategies, although the benefit of each data type remains uncertain. Third, clinical translation from laboratory validation to real-world application should be accelerated so that AI systems can be deployed across diverse healthcare systems. With the continued advancement of standardized data sharing, algorithm optimization, and clinical evidence-based research, AI-assisted endoscopy may become an increasingly important tool for early GI cancer screening in the coming years. In conclusion, despite the existing technical and clinical challenges, the ongoing evolution of AI, coupled with multidisciplinary integration, is expected to advance precision medicine and personalized screening in early GI cancer detection.

| 1. | Li S, Xu M, Meng Y, Sun H, Zhang T, Yang H, Li Y, Ma X. The application of the combination between artificial intelligence and endoscopy in gastrointestinal tumors. MedComm Oncol. 2024;3:e91. |

| 2. | Yaduvanshi V, Murugan R, Goel T. Automatic oral cancer detection and classification using modified local texture descriptor and machine learning algorithms. Multimed Tools Appl. 2024;84:1031-1055. |

| 3. | Zhao Q, Chi T. Deep learning model can improve the diagnosis rate of endoscopic chronic atrophic gastritis: a prospective cohort study. BMC Gastroenterol. 2022;22:133. |

| 4. | Kim YH, Kim GH, Kim KB, Lee MW, Lee BE, Baek DH, Kim DH, Park JC. Application of A Convolutional Neural Network in The Diagnosis of Gastric Mesenchymal Tumors on Endoscopic Ultrasonography Images. J Clin Med. 2020;9:3162. |

| 5. | Ma H, Tian R, Li H, Sun H, Lu G, Liu R, Wang Z. Fus2Net: a novel Convolutional Neural Network for classification of benign and malignant breast tumor in ultrasound images. Biomed Eng Online. 2021;20:112. |

| 6. | Badwelan M, Muaddi H, Ahmed A, Lee KT, Tran SD. Oral Squamous Cell Carcinoma and Concomitant Primary Tumors, What Do We Know? A Review of the Literature. Curr Oncol. 2023;30:3721-3734. |

| 7. | Saravanan S, Babu NA, T L, Dharmalingam Jothinathan MK. Leveraging advanced technologies for early detection and diagnosis of oral cancer: Warning alarm. Oral Oncol Rep. 2024;10:100260. |

| 8. | Ali S. Where do we stand in AI for endoscopic image analysis? Deciphering gaps and future directions. NPJ Digit Med. 2022;5:184. |

| 9. | Pang X, Zhao Z, Wu Y, Chen Y, Liu J. Computer-aided diagnosis system based on multi-scale feature fusion for screening large-scale gastrointestinal diseases. J Comput Des Eng. 2023;10:368-381. |

| 10. | Thiruvengadam NR, Solaimani P, Shrestha M, Buller S, Carson R, Reyes-Garcia B, Gnass RD, Wang B, Albasha N, Leonor P, Saumoy M, Coimbra R, Tabuenca A, Srikureja W, Serrao S. The Efficacy of Real-time Computer-aided Detection of Colonic Neoplasia in Community Practice: A Pragmatic Randomized Controlled Trial. Clin Gastroenterol Hepatol. 2024;22:2221-2230.e15. |

| 11. | Singh G, Kamalja A, Patil R, Karwa A, Tripathi A, Chavan P. A comprehensive assessment of artificial intelligence applications for cancer diagnosis. Artif Intell Rev. 2024;57:179. |

| 12. | Olivo M, Bhuvaneswari R, Keogh I. Advances in bio-optical imaging for the diagnosis of early oral cancer. Pharmaceutics. 2011;3:354-378. |

| 13. | Hoda N, Moza A, Byadgi AA, Sabitha KS. Artificial intelligence based assessment and application of imaging techniques for early diagnosis in oral cancers. Int Surg J. 2024;11:318-322. |

| 14. | Chaudhary N, Rai A, Rao AM, Faizan MI, Augustine J, Chaurasia A, Mishra D, Chandra A, Chauhan V, Ahmad T. High-resolution AI image dataset for diagnosing oral submucous fibrosis and squamous cell carcinoma. Sci Data. 2024;11:1050. |

| 15. | Deo BS, Pal M, Panigrahi PK, Pradhan A. Supremacy of attention-based transformer in oral cancer classification using histopathology images. Int J Data Sci Anal. 2025;20:969-987. |

| 16. | Chand S, Namasivayam K, Dave J, Preejith SP, Jayachandran S, Sivaprakasam M. In-vivo non-contact multispectral oral disease image dataset with segmentation. Sci Data. 2024;11:1298. |

| 17. | Chidambaram K, Kumar Parida P, Mittal Y, Chappity P, Kumar Samal D, Pradhan P, Sarkar S, Kumar Adhya A. Correlation of Narrow Band Imaging Patterns with Histopathology Reports in Head and Neck Lesions. Indian J Otolaryngol Head Neck Surg. 2024;76:4171-4178. |

| 18. | Shibahara T, Yamamoto N, Yakushiji T, Nomura T, Sekine R, Muramatsu K, Ohata H. Narrow-band imaging system with magnifying endoscopy for early oral cancer. Bull Tokyo Dent Coll. 2014;55:87-94. |

| 19. | Zhang Y, Wu Y, Pan D, Zhang Z, Jiang L, Feng X, Jiang Y, Luo X, Chen Q. Accuracy of narrow band imaging for detecting the malignant transformation of oral potentially malignant disorders: A systematic review and meta-analysis. Front Surg. 2022;9:1068256. |

| 20. | Farah CS. Narrow Band Imaging-guided resection of oral cavity cancer decreases local recurrence and increases survival. Oral Dis. 2018;24:89-97. |

| 21. | Jerjes W, Stevenson H, Ramsay D, Hamdoon Z. Enhancing Oral Cancer Detection: A Systematic Review of the Diagnostic Accuracy and Future Integration of Optical Coherence Tomography with Artificial Intelligence. J Clin Med. 2024;13:5822. |

| 22. | Niu H, Zhou Y, Yan X, Wu J, Shen Y, Yi Z, Hu J. On the applications of neural ordinary differential equations in medical image analysis. Artif Intell Rev. 2024;57:236. |

| 23. | Yang Z, Shang J, Liu C, Zhang J, Liang Y. Identification of oral cancer in OCT images based on an optical attenuation model. Lasers Med Sci. 2020;35:1999-2007. |

| 24. | Yang Z, Pan H, Shang J, Zhang J, Liang Y. Deep-Learning-Based Automated Identification and Visualization of Oral Cancer in Optical Coherence Tomography Images. Biomedicines. 2023;11:802. |

| 25. | Li Y, Gu W, Yue H, Lei G, Guo W, Wen Y, Tang H, Luo X, Tu W, Ye J, Hong R, Cai Q, Gu Q, Liu T, Miao B, Wang R, Ren J, Lei W. Real-time detection of laryngopharyngeal cancer using an artificial intelligence-assisted system with multimodal data. J Transl Med. 2023;21:698. |

| 26. | Nakajo K, Inaba A, Aoyama N, Takashima K, Kadota T, Yoda Y, Morishita Y, Okano W, Tomioka T, Shinozaki T, Matsuura K, Hayashi R, Akimoto T, Yano T. The characteristics of missed pharyngeal and laryngeal cancers at gastrointestinal endoscopy. Jpn J Clin Oncol. 2022;52:575-582. |

| 27. | Kim HB, Song J, Park S, Lee YO. Classification of laryngeal diseases including laryngeal cancer, benign mucosal disease, and vocal cord paralysis by artificial intelligence using voice analysis. Sci Rep. 2024;14:9297. |

| 28. | Renjith VR, Judith JE. A Review on Explainable Artificial Intelligence for Gastrointestinal Cancer using Deep Learning. Proceedings of the 2023 Annual International Conference on Emerging Research Areas: International Conference on Intelligent Systems (AICERA/ICIS); 2023 Nov 16-18; Kanjirapally, India. IEEE, 2023: 1-6. |

| 29. | Kaur P, Chand T, Rani S. Integration of Artificial Intelligence in Laryngeal Cancer Diagnosis and Prognosis: A Comparative Analysis Bridging Traditional Medical Practices with Modern Computational Techniques. Arch Computat Methods Eng. 2025;. |

| 30. | Yang Z, Guan F, Bronk L, Zhao L. Multi-omics approaches for biomarker discovery in predicting the response of esophageal cancer to neoadjuvant therapy: A multidimensional perspective. Pharmacol Ther. 2024;254:108591. |

| 31. | Aziz MA. Multiomics approach towards characterization of tumor cell plasticity and its significance in precision and personalized medicine. Cancer Metastasis Rev. 2024;43:1549-1559. |

| 32. | Ebigbo A, Messmann H, Lee SH. Artificial Intelligence Applications in Image-Based Diagnosis of Early Esophageal and Gastric Neoplasms. Gastroenterology. 2025;169:396-415.e2. |

| 33. | Horiuchi Y, Hirasawa T, Fujisaki J. Application of artificial intelligence for diagnosis of early gastric cancer based on magnifying endoscopy with narrow-band imaging. Clin Endosc. 2024;57:11-17. |

| 34. | Ueyama H, Kato Y, Akazawa Y, Yatagai N, Komori H, Takeda T, Matsumoto K, Ueda K, Matsumoto K, Hojo M, Yao T, Nagahara A, Tada T. Application of artificial intelligence using a convolutional neural network for diagnosis of early gastric cancer based on magnifying endoscopy with narrow-band imaging. J Gastroenterol Hepatol. 2021;36:482-489. |

| 35. | Baik YS, Lee H, Kim YJ, Chung JW, Kim KG. Early detection of esophageal cancer: Evaluating AI algorithms with multi-institutional narrowband and white-light imaging data. PLoS One. 2025;20:e0321092. |

| 36. | Ishihara R, Muto M. Current status of endoscopic detection, characterization and staging of superficial esophageal squamous cell carcinoma. Jpn J Clin Oncol. 2022;52:799-805. |

| 37. | Suzuki Y, Nomura K, Kikuchi D, Iizuka T, Koseki M, Kawai Y, Okamura T, Ochiai Y, Hayasaka J, Mitsunaga Y, Odagiri H, Yamashita S, Matsui A, Ohashi K, Hoteya S. Diagnostic Performance of Endoscopic Ultrasonography with Water-Filled Balloon Method for Superficial Esophageal Squamous Cell Carcinoma. Dig Dis Sci. 2023;68:3974-3984. |

| 38. | Wang Z, Liu Y, Niu X. Application of artificial intelligence for improving early detection and prediction of therapeutic outcomes for gastric cancer in the era of precision oncology. Semin Cancer Biol. 2023;93:83-96. |

| 39. | Jin Z, Gan T, Wang P, Fu Z, Zhang C, Yan Q, Zheng X, Liang X, Ye X. Deep learning for gastroscopic images: computer-aided techniques for clinicians. Biomed Eng Online. 2022;21:12. |

| 40. | Zhou C, Dai P, Hou A, Zhang Z, Liu L, Li A, Wang F. A comprehensive review of deep learning-based models for heart disease prediction. Artif Intell Rev. 2024;57:263. |

| 41. | Klang E, Sourosh A, Nadkarni GN, Sharif K, Lahat A. Deep Learning and Gastric Cancer: Systematic Review of AI-Assisted Endoscopy. Diagnostics (Basel). 2023;13:3613. |

| 42. | Hirasawa T, Ikenoyama Y, Ishioka M, Namikawa K, Horiuchi Y, Nakashima H, Fujisaki J. Current status and future perspective of artificial intelligence applications in endoscopic diagnosis and management of gastric cancer. Dig Endosc. 2021;33:263-272. |

| 43. | Deng Y, Qin HY, Zhou YY, Liu HH, Jiang Y, Liu JP, Bao J. Artificial intelligence applications in pathological diagnosis of gastric cancer. Heliyon. 2022;8:e12431. |

| 44. | Cabral BP, Braga LAM, Syed-Abdul S, Mota FB. Future of Artificial Intelligence Applications in Cancer Care: A Global Cross-Sectional Survey of Researchers. Curr Oncol. 2023;30:3432-3446. |

| 45. | Gao Y, Wen P, Liu Y, Sun Y, Qian H, Zhang X, Peng H, Gao Y, Li C, Gu Z, Zeng H, Hong Z, Wang W, Yan R, Hu Z, Fu H. Application of artificial intelligence in the diagnosis of malignant digestive tract tumors: focusing on opportunities and challenges in endoscopy and pathology. J Transl Med. 2025;23:412. |

| 46. | Cocca S, Pontillo G, Grande G, Conigliaro R. Artificial intelligence in detection of small bowel lesions and their bleeding risk: A new step forward. World J Gastroenterol. 2024;30:2482-2484. |

| 47. | Popa SL, Stancu B, Ismaiel A, Turtoi DC, Brata VD, Duse TA, Bolchis R, Padureanu AM, Dita MO, Bashimov A, Incze V, Pinna E, Grad S, Pop AV, Dumitrascu DI, Munteanu MA, Surdea-Blaga T, Mihaileanu FV. Enteroscopy versus Video Capsule Endoscopy for Automatic Diagnosis of Small Bowel Disorders-A Comparative Analysis of Artificial Intelligence Applications. Biomedicines. 2023;11:2991. |

| 48. | Parikh M, Tejaswi S, Girotra T, Chopra S, Ramai D, Tabibian JH, Jagannath S, Ofosu A, Barakat MT, Mishra R, Girotra M. Use of Artificial Intelligence in Lower Gastrointestinal and Small Bowel Disorders: An Update Beyond Polyp Detection. J Clin Gastroenterol. 2025;59:121-128. |

| 49. | Singh P, Chakurkar P. Deep learning based Wireless Capsule Endoscopy for Small Intestinal Lesions Detection and personalized treatment pathways. Proceedings of the 2023 14th International Conference on Computing Communication and Networking Technologies (ICCCNT); 2023 Jul 6-8; Delhi, India. IEEE, 2023: 1-8. |

| 50. | Klang E, Grinman A, Soffer S, Margalit Yehuda R, Barzilay O, Amitai MM, Konen E, Ben-Horin S, Eliakim R, Barash Y, Kopylov U. Automated Detection of Crohn's Disease Intestinal Strictures on Capsule Endoscopy Images Using Deep Neural Networks. J Crohns Colitis. 2021;15:749-756. |

| 51. | Spada C, Piccirelli S, Hassan C, Ferrari C, Toth E, González-Suárez B, Keuchel M, McAlindon M, Finta Á, Rosztóczy A, Dray X, Salvi D, Riccioni ME, Benamouzig R, Chattree A, Humphries A, Saurin JC, Despott EJ, Murino A, Johansson GW, Giordano A, Baltes P, Sidhu R, Szalai M, Helle K, Nemeth A, Nowak T, Lin R, Costamagna G. AI-assisted capsule endoscopy reading in suspected small bowel bleeding: a multicentre prospective study. Lancet Digit Health. 2024;6:e345-e353. |

| 52. | Guerrero Vinsard D, Fetzer JR, Agrawal U, Singh J, Damani DN, Sivasubramaniam P, Poigai Arunachalam S, Leggett CL, Raffals LE, Coelho-Prabhu N. Development of an artificial intelligence tool for detecting colorectal lesions in inflammatory bowel disease. iGIE. 2023;2:91-101.e6. |

| 53. | Ribeiro T, Mascarenhas M, Afonso J, Cardoso H, Andrade AP, Lopes S, Mascarenhas Saraiva M, Ferreira J, Macedo G. P156 A multicentric study on the development and application of a deep learning algorithm for automatic detection of ulcers and erosions in the novel PillCam™ Crohn’s capsule. J Crohns Colitis. 2022;16:i232-i234. |

| 54. | Hassan C, Bisschops R, Sharma P, Mori Y. Colon Cancer Screening, Surveillance, and Treatment: Novel Artificial Intelligence Driving Strategies in the Management of Colon Lesions. Gastroenterology. 2025;169:444-455. |

| 55. | Zhang H, Wu Q, Sun J, Wang J, Zhou L, Cai W, Zou D. A computer-aided system improves the performance of endoscopists in detecting colorectal polyps: a multi-center, randomized controlled trial. Front Med (Lausanne). 2023;10:1341259. |

| 56. | Kikuchi R, Okamoto K, Ozawa T, Shibata J, Ishihara S, Tada T. Endoscopic Artificial Intelligence for Image Analysis in Gastrointestinal Neoplasms. Digestion. 2024;105:419-435. |

| 57. | Chittleborough T, Tapper R, Eglinton T, Frizelle F. Anal squamous intraepithelial lesions: an update and proposed management algorithm. Tech Coloproctol. 2020;24:95-103. |

| 58. | Zhou N, Yuan X, Liu W, Luo Q, Liu R, Hu B. Artificial intelligence in endoscopic diagnosis of esophageal squamous cell carcinoma and precancerous lesions. Chin Med J (Engl). 2025;138:1387-1398. |

| 59. | Mcgowan M, Otott P. Preventing cervical cancer through human papillomavirus vaccination and cervical screening programmes. Obstet Gynaecol Reprod Med. 2024;34:29-32. |

| 60. | Gong EJ, Bang CS. Edge Artificial Intelligence Device in Real-Time Endoscopy for the Classification of Colonic Neoplasms. Diagnostics (Basel). 2025;15:1478. |

| 61. | Boem A, Turchet L. Selection as Tapping: An evaluation of 3D input techniques for timing tasks in musical Virtual Reality. Int J Hum-Comput St. 2024;185:103231. |